This took about 5 months of sporadic work to complete, and was written in C++ for Windows machines. It comprised a number of subsystems written to industry standards, some of which leveraged middleware, and all of which drew on a wealth of theory.

The final gamut of subsystems included resource-management, 3D graphics, 2D physics, file I/O, audio, human-interface, profiling, and an events system to tie them together. You can find an explanation of each below.

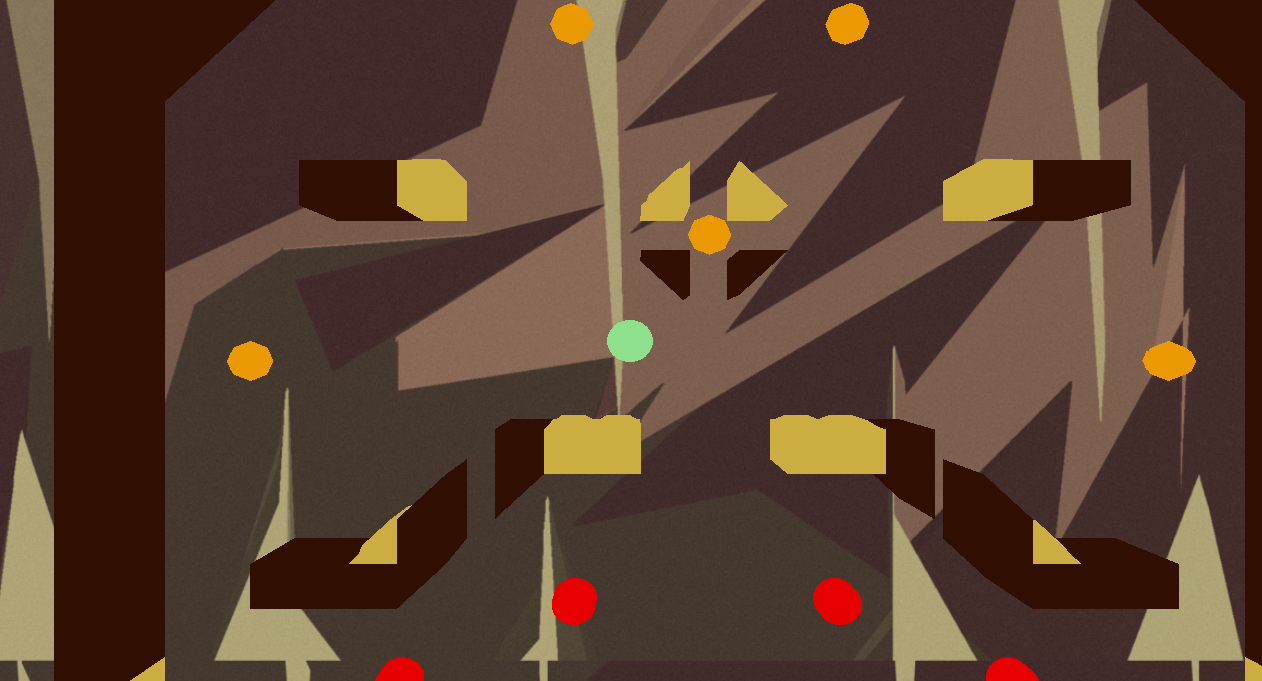

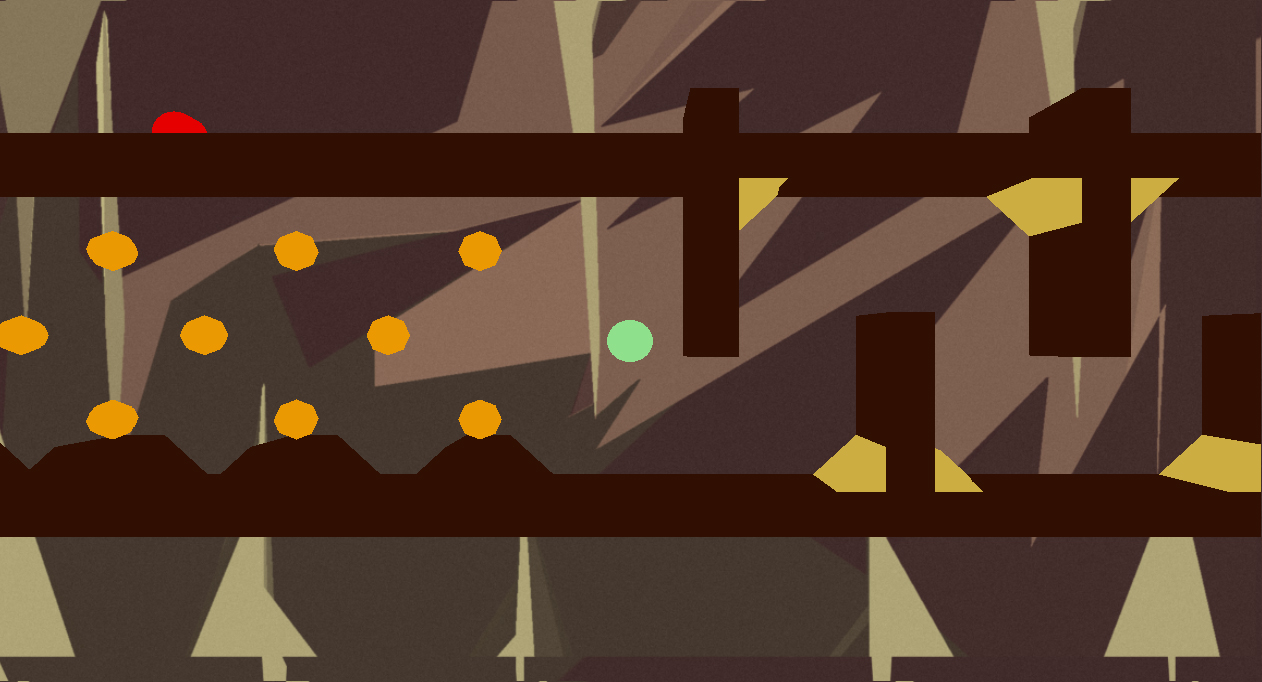

After demonstrating the capabilities of the engine, I went on to add a gameplay subsystem to utilise it, producing a game called ‘Sinball’, an amalgam of a pinball arcade and a brutal platformer.

Ultimately, I delivered a fully functioning and reasonably polished experience that was a lot of fun to play. The experience tested almost all of the skills I had learned during my course, and pushed me to get comfortable architecting complicated systems along unfamiliar lines: a long-haul divide-and-conquer with a rich reward at the end.

Jump, bounce and roll towards the water

Design

I had a play around in Unity and came up with the concept for a pinball game where the stage was built from tiles, with the Molyneux-esque twist being that you control the ball. I have a soft spot for Space Cadet and Pokémon Pinball, and the idea of aping them seemed both fun and achievable given the deadline. I could already visualise the data format, and I figured it would be more interesting to have to tweak the physics middleware past its out-of-the-box settings to mimic the elasticity and restitution of a pinball.

Technology Middleware

“Don’t reinvent the wheel” – Every programmer, at least once

‘Middleware’ refers to libraries of ready-made solutions. Without some dependency management jazz like Maven to help me, it was a brute-force exercise in downloading the right .zip files from websites that look like they’d prefer to be left alone (just follow their tutorials).

Gah, I hadn’t expected setting up pathing and versioning for .dll files in Visual Studio would be so finicky! I ended up with some per-build (debug/release) config and a littered resources folder that, despite working, I’m still scared to touch to this day.

I opted to use the in-house graphics library we’d been developing alongside our studies, which relied on OpenGL, supported any regular old shaders and textures, and, pivotally, could be extended to read .obj files, meaning I could use Blender to design my stage. For physics, since pinball is typically restricted to two dimensions by a glass plate, I used the lightweight and well-documented Box2D. For audio, I used SFML, as it was one of the few free options with sensible requirements that was capable of spatial sound. Lastly, for Xbox controller support, I used Microsoft’s XInput, which is handily shipped with Windows.

Building Blocks

The most atomic unit of a game engine is an ‘entity’. Some common features of entities are worth encapsulating for access across subsystems. Mine stored their position in the ‘game world’ as a tuple inside a GameObject, along with pointers to extra per-system metadata, such as PhysicsData (their position in the simulation) and GraphicsData (their shaders, textures and mesh). They also had some basic toggles, such as enabled and visible. Creating a custom Entity, such as a pinball, was therefore as simple as bootstrapping its own fields in its constructor, which defined how it should look and act.
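A minimal sketch of that layout follows, assuming C++14 or later. The names GameObject, PhysicsData, GraphicsData, Entity and PinballEntity come from this write-up, and `gameObject->x` mirrors the snippets further down; every field inside the metadata structs (isDynamic, restitution, mesh/texture/shader strings) and the tuning values are hypothetical stand-ins, not the engine’s actual definitions.

```cpp
#include <cassert>
#include <memory>
#include <string>

// Per-system metadata; these fields are illustrative guesses.
struct PhysicsData {
    bool isDynamic = false;    // static walls vs. moving bodies
    float restitution = 0.0f;  // bounciness handed to the physics middleware
};

struct GraphicsData {
    std::string mesh;
    std::string texture;
    std::string shader;
};

// World position plus optional per-system data (null if a system ignores the entity).
struct GameObject {
    float x = 0.0f;
    float y = 0.0f;
    std::unique_ptr<PhysicsData> physics;
    std::unique_ptr<GraphicsData> graphics;
};

class Entity {
public:
    virtual ~Entity() = default;
    std::unique_ptr<GameObject> gameObject = std::make_unique<GameObject>();
    bool enabled = true;
    bool visible = true;
};

// A concrete entity decides how it looks and acts in its constructor.
class PinballEntity : public Entity {
public:
    PinballEntity(float x, float y) {
        gameObject->x = x;
        gameObject->y = y;
        gameObject->physics = std::make_unique<PhysicsData>();
        gameObject->physics->isDynamic = true;
        gameObject->physics->restitution = 0.8f;  // hypothetical tuning value
        gameObject->graphics = std::make_unique<GraphicsData>();
        gameObject->graphics->mesh = "pinball.obj";  // hypothetical asset names
        gameObject->graphics->shader = "pinball";
    }
};
```

Each subsystem only reads the slice of metadata it cares about, so a purely decorative entity can simply leave `physics` null.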

Resource Management

Critical to any game engine is efficient use of memory. Allocating from contiguous, strictly indexed blocks of malloc’d memory meant overriding the new operator for the managed entities that would be spawned and re-used many times (e.g. game entities such as tiles).

I created the concept of a ResourceManager that held stores of segmented sizes. Each Store held bins of MemoryChunks, each recording its state (free/allocated) and a pointer to its memory. These structs made the code clearer, and although I was hesitant while writing it, the resulting files were very simple.
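The scheme can be sketched as a set of fixed-size pools plus a class-level `operator new`/`operator delete` override. This is an illustration under assumptions, not the engine’s code: ResourceManager, Store and TileEntity are names from this write-up, but the size classes, chunk counts, first-fit scan, singleton access and the use of a `std::vector<bool>` of flags in place of MemoryChunk structs are all hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <functional>
#include <initializer_list>
#include <memory>
#include <new>
#include <vector>

// One contiguous malloc'd block split into equal chunks, each flagged free/allocated.
class Store {
public:
    Store(std::size_t chunkSize, std::size_t chunkCount)
        : chunkSize(chunkSize), used(chunkCount, false),
          memory(static_cast<unsigned char*>(std::malloc(chunkSize * chunkCount))) {
        if (memory == nullptr) throw std::bad_alloc();
    }
    ~Store() { std::free(memory); }
    Store(const Store&) = delete;
    Store& operator=(const Store&) = delete;

    // First-fit scan for a free chunk; nullptr when the store is full.
    void* Allocate() {
        for (std::size_t i = 0; i < used.size(); ++i) {
            if (!used[i]) {
                used[i] = true;
                return memory + i * chunkSize;
            }
        }
        return nullptr;
    }
    bool Owns(const void* p) const {
        auto* c = static_cast<const unsigned char*>(p);
        std::less<const unsigned char*> before;
        return !before(c, memory) && before(c, memory + chunkSize * used.size());
    }
    void Free(void* p) {
        auto index = static_cast<std::size_t>(static_cast<unsigned char*>(p) - memory) / chunkSize;
        assert(used[index] && "double free");
        used[index] = false;
    }

    const std::size_t chunkSize;

private:
    std::vector<bool> used;
    unsigned char* memory;
};

// Routes each request to the smallest store whose chunks are big enough.
class ResourceManager {
public:
    static ResourceManager& Instance() {
        static ResourceManager instance;
        return instance;
    }
    void* Allocate(std::size_t size) {
        for (auto& store : stores)
            if (size <= store->chunkSize)
                if (void* p = store->Allocate()) return p;
        throw std::bad_alloc();
    }
    void Free(void* p) {
        for (auto& store : stores)
            if (store->Owns(p)) { store->Free(p); return; }
    }

private:
    ResourceManager() {
        // Hypothetical size classes; multiples of 16 keep chunks aligned on typical platforms.
        for (std::size_t size : {32u, 64u, 128u})
            stores.push_back(std::make_unique<Store>(size, 256));
    }
    std::vector<std::unique_ptr<Store>> stores;
};

// A managed entity: new/delete go through the pools instead of the general heap.
struct TileEntity {
    int type = 0;
    float x = 0.0f, y = 0.0f;
    static void* operator new(std::size_t size) { return ResourceManager::Instance().Allocate(size); }
    static void operator delete(void* p) { ResourceManager::Instance().Free(p); }
};
```

Because every chunk in a store is the same size, a freed slot can always be reused by the next object of that class, which is why this style of allocator sidesteps fragmentation.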

Given more time, I would have liked to pre-load a manifest of static assets as well; as it stands, the engine hangs on first load. Nonetheless, managing resources in this way increased the frame-rate drastically, and meant I didn’t have to worry about memory fragmentation. It also made debugging easier, as snapshots of memory were more orderly.

Game Loop

The final step function, which ran inside a while loop in main, looked like this:

```cpp
bool Core::Step()
{
    graphics.Update();
    humanInterface.Update();
    gameplay.Update();

    if (!gameplay.IsPaused() && !gameplay.IsFinished())
    {
        physics.Update();
    }

    if (!gameplay.IsFinished())
    {
        audio.Update();
        profiling.Update();
        eventManager->RemoveFinishedEvents();
    }

    return !(gameplay.IsFinished() || graphics.IsFinished());
}
```

As you can see, nuances regarding exiting the program aside, each subsystem gets its own turn to update itself, reacting to and potentially creating new events.

Event System

In order for each subsystem to function interactively in the game loop, they each needed an abstract way of informing the others of changes. They all stored a reference to a global list of game entities, but needed something to act as a communication channel. To compound the problem, some entities implemented listeners like OnCollision, inspired by the ‘components’ or ‘nodes’ in other popular game engines, and these functions operated at a different scope from the subsystems.

So, using OOP, I created a hierarchy comprising an EventManager that stored a queue of Event objects, whose payloads could optionally be extended by each sub-type and tagged for delivery to a particular subsystem. Extending an abstract Subsystem class, each system would automatically consume the events intended for it during its update step, and produce new events for the queue where appropriate. These events were mapped to internal handler functions using lambdas. Entities themselves could also implement optional interfaces exposing handling methods for certain scenarios, and also produce new events from within them.

It is worth noting that the process of development here was very iterative: I started by seeing whether pressing the W key could make something, somewhere, respond by shouting a message to the console, and then built from there.

To expound, take the case of the pinball hitting a wall. The physics system would poll its middleware to detect a collision, and then call a handler on the pinball entity:

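```cpp
if (collisionDetected) // Simplified!
{
    // dynamic_cast needs its target type; it yields nullptr if entityA isn't a Collider
    Collider * c = dynamic_cast<Collider *>(entityA);
    if (c != nullptr)
    {
        c->OnCollisionStart(entityB);
    }
}
```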

Inside of its handler, it would, among other things, register a PlaySoundEvent with the EventManager:

```cpp
void PinballEntity::OnCollisionStart(Entity * e)
{
    eventManager->AddEvent(new PlaySoundEvent("./Resources/Audio/collide-wall.wav",
                                              gameObject->x, gameObject->y));
}
```

The PlaySoundEvent was hard-coded with the Audio system as its sole recipient. On the receiving side, the audio system mapped the event type to a handler function in its constructor, and the handler was invoked from the base Subsystem class’s update step.

```cpp
Audio::Audio(EventManager * eventManager) :
    Subsystem("Audio", Event::AUDIO, eventManager)
{
    eventMap[Event::AUDIO_PLAY_SOUND] = [this](Event * e) { HandlePlaySoundEvent(e); };
}
```

This was a streamlined process very similar to pub/sub, which meant the engine’s communication was limited only by how many event types had handlers. A key-press inside the human-interface system created an InputEvent that the gameplay system transmuted into a MoveEvent for the physics system to process, and so on. Although it relied on the game loop running in a logical order (as it is not multi-threaded), it allowed each subsystem to remain decoupled from the implementation details of the others.
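The snippets in this section can be tied together in one compact, self-contained sketch. Event, PlaySoundEvent, EventManager, AddEvent, RemoveFinishedEvents, Subsystem, eventMap and the Audio constructor follow the code shown here; the enum values, the `handled` flag, `EventsFor`, `Size` and the `played` list (a stand-in for calling the audio middleware) are assumptions, and the `std::unique_ptr` ownership is this sketch’s choice rather than necessarily the engine’s.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <functional>
#include <memory>
#include <string>
#include <unordered_map>
#include <utility>
#include <vector>

// Base event: who it is for, what kind it is, and whether it has been consumed.
struct Event {
    enum Target { AUDIO, PHYSICS, GAMEPLAY };
    enum Type { AUDIO_PLAY_SOUND, PHYSICS_MOVE };
    Event(Target target, Type type) : target(target), type(type) {}
    virtual ~Event() = default;
    Target target;
    Type type;
    bool handled = false;
};

// Sub-types extend the payload.
struct PlaySoundEvent : Event {
    PlaySoundEvent(std::string path, float x, float y)
        : Event(AUDIO, AUDIO_PLAY_SOUND), path(std::move(path)), x(x), y(y) {}
    std::string path;
    float x, y;
};

class EventManager {
public:
    // Takes ownership of a raw pointer, matching the AddEvent(new ...) call style.
    void AddEvent(Event* e) { queue.emplace_back(e); }
    // Snapshot of unhandled events for one recipient, so handlers may enqueue more.
    std::vector<Event*> EventsFor(Event::Target target) const {
        std::vector<Event*> result;
        for (const auto& e : queue)
            if (!e->handled && e->target == target) result.push_back(e.get());
        return result;
    }
    void RemoveFinishedEvents() {
        queue.erase(std::remove_if(queue.begin(), queue.end(),
                                   [](const std::unique_ptr<Event>& e) { return e->handled; }),
                    queue.end());
    }
    std::size_t Size() const { return queue.size(); }

private:
    std::vector<std::unique_ptr<Event>> queue;
};

class Subsystem {
public:
    Subsystem(std::string name, Event::Target target, EventManager* eventManager)
        : name(std::move(name)), target(target), eventManager(eventManager) {}
    virtual ~Subsystem() = default;
    // Consume this subsystem's events, dispatching each through eventMap.
    virtual void Update() {
        for (Event* e : eventManager->EventsFor(target)) {
            auto handler = eventMap.find(e->type);
            if (handler != eventMap.end()) handler->second(e);
            e->handled = true;
        }
    }

protected:
    std::string name;
    Event::Target target;
    EventManager* eventManager;
    std::unordered_map<int, std::function<void(Event*)>> eventMap;
};

class Audio : public Subsystem {
public:
    explicit Audio(EventManager* eventManager) : Subsystem("Audio", Event::AUDIO, eventManager) {
        eventMap[Event::AUDIO_PLAY_SOUND] = [this](Event* e) { HandlePlaySoundEvent(e); };
    }
    std::vector<std::string> played;  // stand-in for handing the file to the audio library

private:
    void HandlePlaySoundEvent(Event* e) { played.push_back(static_cast<PlaySoundEvent*>(e)->path); }
};
```

Marking events as handled and sweeping them in a separate `RemoveFinishedEvents` call mirrors the game loop above, where cleanup happens once per frame after every subsystem has had its turn.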

Physics

Technically, the final game didn’t need graphics. I’d have gotten pretty poor feedback, I’m sure, but the simulation still functions fine without a window. Indeed, it did for a time while I was fleshing things out. I had to set the timestep in such a way that it used delta time properly, and balance the number of calculation passes against it (too many -> too slow, too few -> too unpredictable).
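One common way to make the physics independent of frame rate is a fixed-timestep accumulator, sketched below. This is a standard technique swapped in for illustration, not necessarily what the engine did, and FixedStepper with its parameters is hypothetical. In a Box2D 2.x build, the `simulate` callback would wrap `b2World::Step(timeStep, velocityIterations, positionIterations)`, where the two iteration counts are the ‘calculation passes’ traded off against speed.

```cpp
#include <cassert>
#include <functional>

// Runs the simulation in fixed increments, carrying leftover frame time forward.
class FixedStepper {
public:
    FixedStepper(double step, int maxStepsPerFrame) : step(step), maxSteps(maxStepsPerFrame) {}

    // Feeds one frame's delta time in; returns how many fixed steps were simulated.
    int Advance(double deltaSeconds, const std::function<void(double)>& simulate) {
        accumulator += deltaSeconds;
        int steps = 0;
        while (accumulator >= step && steps < maxSteps) {
            simulate(step);
            accumulator -= step;
            ++steps;
        }
        // After a long stall, drop the backlog rather than spiralling ever further behind.
        if (steps == maxSteps && accumulator >= step) accumulator = 0.0;
        return steps;
    }

private:
    double step;
    int maxSteps;
    double accumulator = 0.0;
};
```

Keeping the step itself constant avoids the unpredictability of feeding a raw, varying delta straight into the solver, while the cap stops a slow frame from triggering ever more catch-up work.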

Aside from digging through header files, it also took some time to figure out the relative scale between the physics representation of space and the graphical representation of space. In a red herring, I originally set the force coefficient so disproportionately low that it appeared as though it wasn’t working at all; it took my friend determinedly leaning on the keys for a solid 5 minutes, as a pink box eked its way off my platform, to convince me otherwise.

I also came across a disparity between an object’s angle of rotation in the physics and graphics systems: one was using radians and the other degrees, resulting in some weird spinning cubes. If you’re interested, standard C++ (<cmath>) doesn’t define a RadToDeg function, though some maths libraries offer one (GLM has glm::degrees); otherwise, simply compute r × 180/π.
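Both mismatches come down to unit conversion at the boundary between systems. A small sketch, assuming a hypothetical scale of 32 world units per metre: Box2D’s manual says it is tuned for moving shapes roughly between 0.1 and 10 metres, so a fixed scale like this lets graphics stay in convenient units while bodies stay metre-sized. The constant and helper names are illustrative, not from any library.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical scale between Box2D metres and graphics/world units.
constexpr float kUnitsPerMetre = 32.0f;
constexpr float kPi = 3.14159265358979323846f;

constexpr float MetresToUnits(float metres) { return metres * kUnitsPerMetre; }
constexpr float UnitsToMetres(float units) { return units / kUnitsPerMetre; }

// Box2D reports body angles in radians; APIs such as legacy glRotatef expect degrees.
constexpr float RadToDeg(float radians) { return radians * 180.0f / kPi; }
constexpr float DegToRad(float degrees) { return degrees * kPi / 180.0f; }
```

Converting only at the seams (when copying body state into the GameObject, and when applying forces) keeps each subsystem internally consistent.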

Graphics

I ended up using a small library called tinyObj to load my meshes. I used the z-axis and some textured quads acting as backgrounds to create a parallax effect. The heavy-lifting here was accomplished in previous projects, where you can find more detail.

Human Interface

Everyone likes to kick-back with a controller now and then. There’s something about adding different kinds of input that gives me a real science-y vibe; I’m only ever a few pints away from adding baguette-support to my engine.

I defined a Controller interface with some more abstract types of input: ‘up’, ‘enter’, ‘primary’, ‘secondary’. This helped glue the Keyboard and XboxController implementations together, and meant I could map multiple redundant keys (e.g. W, ↑) to the same input for flexibility. The system itself polls each connected controller every update, checking for connection/disconnection and whether a button has been pressed. I programmed a state map for each input, so it was possible to tell whether something had been ‘held’ versus ‘pressed’, and thanks to the aforementioned event payloads, analogue input was simply a matter of sending along how far the stick had been pushed in each direction, between 0 and 1, to be used as a multiplier against the pinball’s movement-speed.
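A sketch of the pieces described above, under assumptions: Controller and Keyboard are names from this write-up, but the Input and InputState enums, Bind/SetKey/Poll/State and NormaliseAxis are hypothetical, and SetKey stands in for real OS polling so the example runs anywhere. The thumbstick range (-32768 to 32767) matches XInput’s XINPUT_GAMEPAD axes, and 7849 is the value of XInput’s XINPUT_GAMEPAD_LEFT_THUMB_DEADZONE; the XInput calls themselves are omitted.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <initializer_list>
#include <map>
#include <set>

// Device-agnostic inputs shared by every controller implementation.
enum class Input { Up, Down, Left, Right, Enter, Primary, Secondary };
enum class InputState { Released, Pressed, Held };

class Controller {
public:
    virtual ~Controller() = default;

    // Call once per update: Released -> Pressed on the first frame down, then Held.
    void Poll() {
        for (Input input : {Input::Up, Input::Down, Input::Left, Input::Right,
                            Input::Enter, Input::Primary, Input::Secondary}) {
            InputState& state = states[input];
            if (!IsDown(input)) state = InputState::Released;
            else state = (state == InputState::Released) ? InputState::Pressed : InputState::Held;
        }
    }
    InputState State(Input input) const {
        auto it = states.find(input);
        return it == states.end() ? InputState::Released : it->second;
    }

protected:
    virtual bool IsDown(Input input) const = 0;

private:
    std::map<Input, InputState> states;
};

// Several physical keys can map to one abstract input (e.g. W and the up arrow).
class Keyboard : public Controller {
public:
    void Bind(Input input, int keyCode) { bindings.insert({input, keyCode}); }
    // Stand-in for querying the OS; a real build would poll actual key state.
    void SetKey(int keyCode, bool down) {
        if (down) pressed.insert(keyCode); else pressed.erase(keyCode);
    }

protected:
    bool IsDown(Input input) const override {
        auto range = bindings.equal_range(input);
        return std::any_of(range.first, range.second,
                           [this](const auto& binding) { return pressed.count(binding.second) > 0; });
    }

private:
    std::multimap<Input, int> bindings;
    std::set<int> pressed;
};

// Maps a raw thumbstick axis (-32768..32767) to a 0..1 magnitude past a dead zone.
float NormaliseAxis(short raw, short deadZone) {
    float magnitude = std::min(std::fabs(static_cast<float>(raw)), 32767.0f);
    if (magnitude <= deadZone) return 0.0f;
    return (magnitude - deadZone) / (32767.0f - deadZone);
}

constexpr int kKeyUpArrow = 0x26;  // value of VK_UP on Windows
```

Rescaling from the edge of the dead zone, rather than from zero, means the ball starts moving gently as soon as the stick leaves the dead zone instead of jumping straight to roughly a quarter speed.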

File I/O

Surprisingly, this was my favourite to implement. I enjoy toy-problems, and deciding how to represent the game world felt just like one. There’s no real right-or-wrong here. Some people use in-memory database-dumps (like SQLite), others use more standard interchange formats (like JSON, YML), and some just use an ASCII file and call it dot-whatever-they-like. Guess which one I chose?

My level files ended up taking the form of a space-delimited array of integers. The first two represented the dimensions of the level. The rest were the stage itself, numbers corresponding to different children of TileEntity. Typing it out using carriage returns actually helped visualise the stage.

I read the data files into a Level struct that stores a list of TileEntity. Given more time, it may have been wiser to incorporate the tiles into a LevelEntity, to make the scene-graph less esoteric.
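A minimal reader for that format might look like the following, assuming the header gives width then height (the order isn’t stated above) and that ids are stored row by row. ReadLevel and At are hypothetical names, and this Level holds raw ids where the engine’s version held TileEntity objects.

```cpp
#include <cassert>
#include <cstddef>
#include <istream>
#include <sstream>
#include <stdexcept>
#include <vector>

// Raw level data: dimensions plus one tile id per cell, stored row by row.
struct Level {
    int width = 0;
    int height = 0;
    std::vector<int> tiles;

    int At(int x, int y) const { return tiles[static_cast<std::size_t>(y * width + x)]; }
};

// Reads "width height" followed by width*height tile ids. operator>> skips spaces
// and newlines alike, so the file can be laid out as a visual grid.
Level ReadLevel(std::istream& in) {
    Level level;
    if (!(in >> level.width >> level.height) || level.width <= 0 || level.height <= 0)
        throw std::runtime_error("invalid level header");
    const int count = level.width * level.height;
    level.tiles.reserve(static_cast<std::size_t>(count));
    for (int i = 0; i < count; ++i) {
        int id = 0;
        if (!(in >> id)) throw std::runtime_error("level data ended early");
        level.tiles.push_back(id);
    }
    return level;
}
```

Taking a `std::istream&` lets the same function read from a `std::ifstream` in the game and from a `std::istringstream` in tests.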

To cap it off, I added a WriteToFile function in order to save log output when…

Profiling

Decidedly the least sexy, but nevertheless important, profiling is a means of measuring the performance of a program. Timing the update step of each subsystem and keeping track of the frame-rate seemed to be the most valuable metrics, as they indicated the metaphorical horse-power of my engine.
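Per-subsystem timing and frame counting could be sketched with `std::chrono::steady_clock` and a small RAII timer. Profiler, Scope, Record, EndFrame, AverageMs and Fps are hypothetical names for illustration, not the engine’s API.

```cpp
#include <cassert>
#include <chrono>
#include <map>
#include <string>
#include <utility>

// Accumulates time spent in each subsystem's update and counts frames.
class Profiler {
public:
    using Clock = std::chrono::steady_clock;

    // RAII timer: measures its own lifetime and records it under `name`.
    class Scope {
    public:
        Scope(Profiler& profiler, std::string name)
            : profiler(profiler), name(std::move(name)), start(Clock::now()) {}
        ~Scope() { profiler.Record(name, Clock::now() - start); }

    private:
        Profiler& profiler;
        std::string name;
        Clock::time_point start;
    };

    void Record(const std::string& name, Clock::duration elapsed) { totals[name] += elapsed; }
    void EndFrame() { ++frames; }

    // Mean milliseconds per frame spent in `name` (0 if never recorded).
    double AverageMs(const std::string& name) const {
        auto it = totals.find(name);
        if (it == totals.end() || frames == 0) return 0.0;
        return std::chrono::duration<double, std::milli>(it->second).count() / frames;
    }
    // Frame-rate over a measured wall-clock interval.
    double Fps(double elapsedSeconds) const {
        return elapsedSeconds > 0.0 ? frames / elapsedSeconds : 0.0;
    }

private:
    std::map<std::string, Clock::duration> totals;
    long frames = 0;
};
```

Inside a step function, each update could then be wrapped as `{ Profiler::Scope timer(profiler, "Physics"); physics.Update(); }`, with `EndFrame()` called once per loop iteration. `steady_clock` is used because, unlike the system clock, it never jumps backwards.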

In a similar vein, I also implemented a basic static logger that supported levels, prefixes and timestamps (like browser dev-tools). This helped during debugging, as each module knew its own name, and I could create a clearer picture of a sequence of actions quicker than trawling over a stack-trace or stepping through breakpoints.

In the end, I decided to allow toggling the profiler on/off at run-time, with mechanisms to enable/disable logging to both the console and the local filesystem. Ironically, spewing so many messages to the console actually hampered the performance of the program, creating a self-defeating cycle, so I have some further investigation to do there. I forgot to set it to off by default for release, the moral of that story being that nothing is ever 100% done, no matter how much time you spend perfecting it.
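A static logger with levels, module prefixes, timestamps and run-time sink toggles might be sketched as below. Logger, LogLevel, the field names and the `engine.log` path are hypothetical. One possibly relevant detail for the console slowdown: writing `'\n'` instead of `std::endl` avoids forcing a flush on every message, though whether that was the bottleneck here is unknown.

```cpp
#include <cassert>
#include <ctime>
#include <fstream>
#include <iostream>
#include <sstream>
#include <string>

enum class LogLevel { Debug, Info, Warning, Error };

// Static logger: level filter, module prefix, timestamp, and run-time toggles per sink.
class Logger {
public:
    static bool toConsole;
    static bool toFile;
    static LogLevel minimum;
    static std::string lastLine;  // most recent formatted line, handy for tests

    static void Log(LogLevel level, const std::string& module, const std::string& message) {
        if (level < minimum) return;
        std::ostringstream line;
        line << '[' << Timestamp() << "] [" << Name(level) << "] [" << module << "] " << message;
        lastLine = line.str();
        // '\n' instead of std::endl avoids a flush per message.
        if (toConsole) std::cout << lastLine << '\n';
        if (toFile) {
            std::ofstream file("engine.log", std::ios::app);  // hypothetical path
            file << lastLine << '\n';
        }
    }

private:
    static const char* Name(LogLevel level) {
        switch (level) {
            case LogLevel::Debug: return "DEBUG";
            case LogLevel::Info: return "INFO";
            case LogLevel::Warning: return "WARNING";
            case LogLevel::Error: return "ERROR";
        }
        return "UNKNOWN";
    }
    static std::string Timestamp() {
        std::time_t now = std::time(nullptr);
        char buffer[16];
        std::strftime(buffer, sizeof buffer, "%H:%M:%S", std::localtime(&now));
        return buffer;
    }
};

// Both sinks off by default, so a release build stays quiet unless toggled at run-time.
bool Logger::toConsole = false;
bool Logger::toFile = false;
LogLevel Logger::minimum = LogLevel::Info;
std::string Logger::lastLine;
```

Because the module name travels with every line, a filtered log reads like a timeline of which subsystem did what, which is the advantage over stepping through breakpoints described above.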

Audio

Often overlooked, there’s a lot more nuance to audio implementation than meets the eye. It’s like shopping for a washing machine – how many kg capacity are you after? How many modes do you need? What rpm spin-speed? Energy rating? Warranty? Monthly maintenance add-on?

On the surface, I wanted to play some sounds, so I downloaded a licence-free pinball SFX pack. To my horror, when pressing play I was greeted with a cacophony of undulating, grating explosions. I’d programmed the sound event to trigger on every collision, but with the ball rolling across a flat surface, it was technically colliding every frame. I fixed this by adding a state to represent the ball either touching a surface or not, and an audio timeout, but I fear I may never be the same. It may be wise to implement some sort of max-polyphony.
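That fix (a touching state plus an audio timeout) can be sketched as a small gate consulted by the collision handlers. CollisionSoundGate, its methods and the contact counter are assumptions; counting contacts rather than keeping a single boolean is meant to keep rolling from one tile onto the next silent, since the new contact will often begin before the old one ends.

```cpp
#include <cassert>

// Decides whether a collision should make a sound: only when the ball goes from
// touching nothing to touching something, and at most once per cooldown window.
class CollisionSoundGate {
public:
    explicit CollisionSoundGate(double cooldownSeconds) : cooldown(cooldownSeconds) {}

    // Call from the begin-contact handler; returns true if a sound should play.
    bool OnContactBegin(double now) {
        ++contacts;
        if (contacts > 1) return false;                 // already touching something
        if (now - lastPlayed < cooldown) return false;  // audio timeout still running
        lastPlayed = now;
        return true;
    }
    // Call from the end-contact handler.
    void OnContactEnd() {
        if (contacts > 0) --contacts;
    }
    bool Touching() const { return contacts > 0; }

private:
    double cooldown;
    double lastPlayed = -1e9;  // far in the past, so the first hit always sounds
    int contacts = 0;
};
```

The pinball’s OnCollisionStart would then only queue a PlaySoundEvent when `OnContactBegin` returns true.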

Another challenge was music. Different to SFX, background tracks provide great ambience at the cost of much higher file size – therefore, it’s better to stream them in. I had to add functionality to loop them, and support muting all sounds just-in-case.

Gameplay

With a proof-of-concept of the engine’s core functionality, I set about the most rewarding leg of the journey. Having been building towards one specific game, I already had a strong foundation – an untextured ball on an untextured, simple stage, being moved by the keyboard.

First, I decided on all of the different types of tiles, based on images of real pinball machines:

- Bumper (propels you back)

- Flipper (propels you in a direction)

- Hole (teleports you)

- Wall (sits very still)

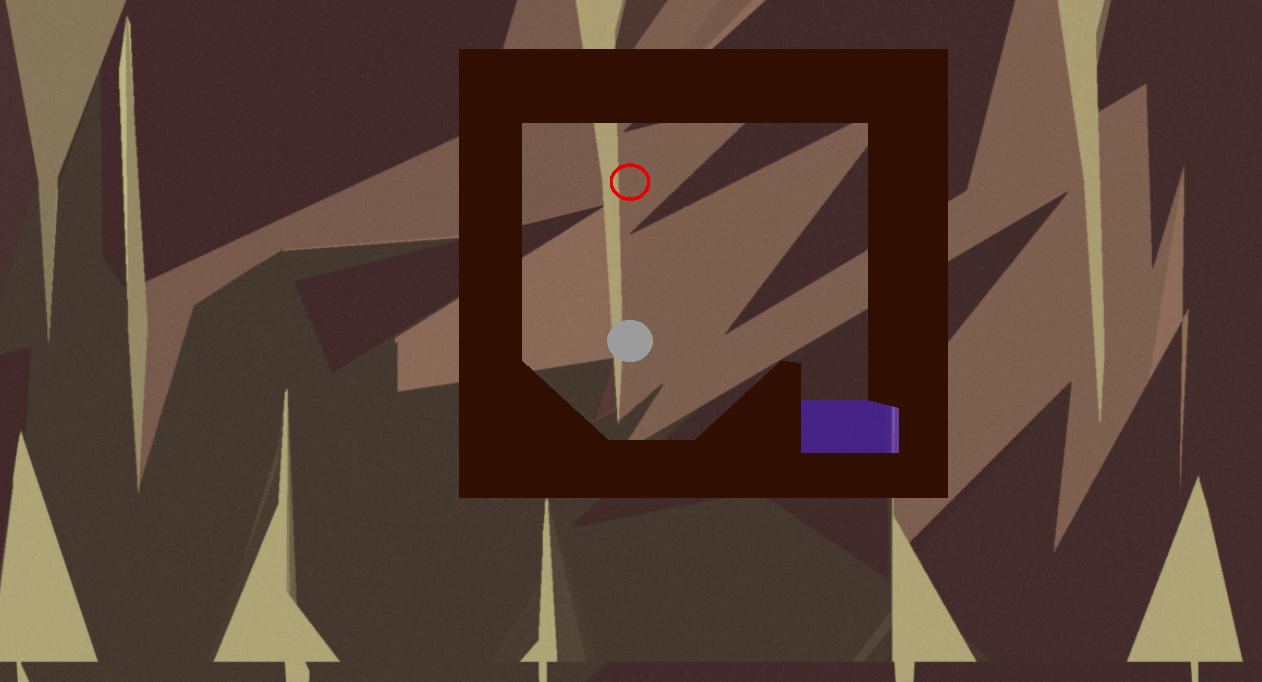

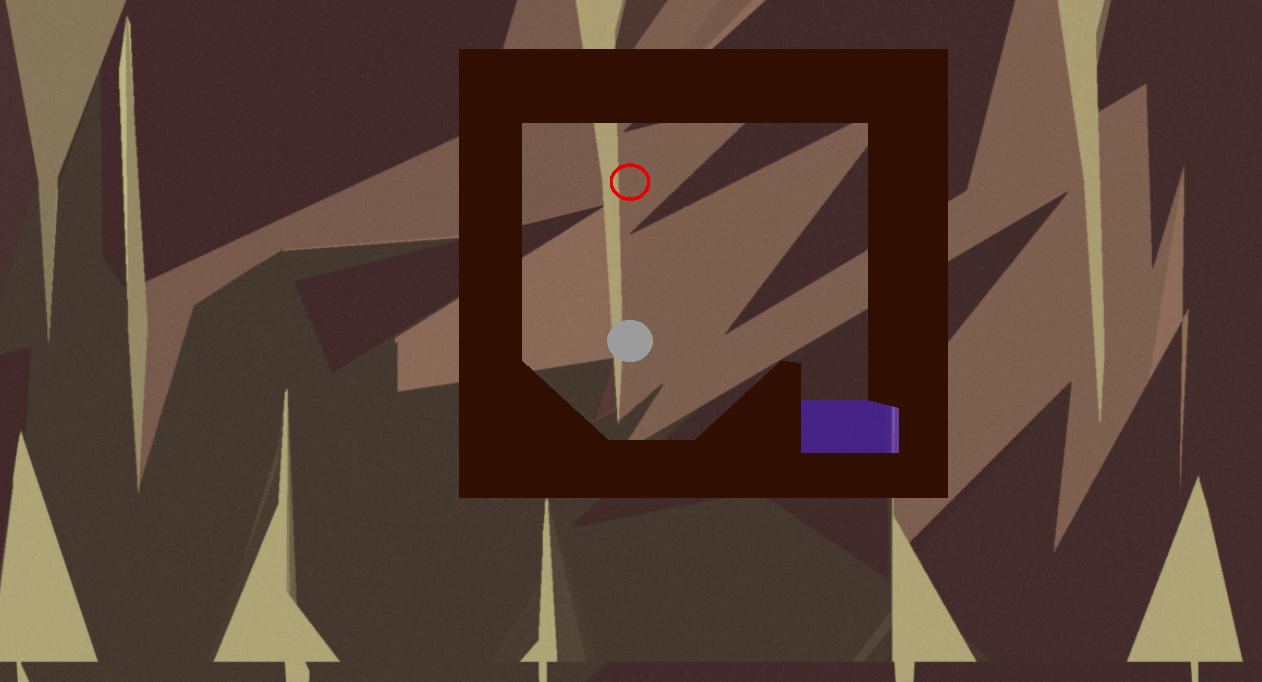

…and of course a spawn and finish point. The idea was to have the player progress to the finish point by using the tiles surrounding them to move, only being able to roll left and right. However, I found in playtesting that even the simplest of vertical levels proved challenging. This led me to implement up and down movement, and a boost button: this way, you could fall faster or slow your descent for more precise landings if you desired, and shoot in a direction every few seconds. The resulting floatiness teetered between satisfying and frustrating. Taking that in stride, I decided this game, like many frustrating platformers before it, was going to revel in its devilishness, and I themed it around being trapped in… well, hell.
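The movement scheme above, steering in four directions plus a boost on a cooldown, might be sketched as follows. BallMover, Vec2 and all numbers are hypothetical; stick input is assumed to be signed per axis in [-1, 1], built from the 0 to 1 per-direction values the input system sends. In a Box2D build, the returned vectors would typically be passed to `b2Body::ApplyForceToCenter` and `b2Body::ApplyLinearImpulse`, and BoostCharge is the kind of 0 to 1 value that could drive a boost-timer shader.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Vec2 { float x, y; };

// Steering in all four directions, plus a boost that recharges over a cooldown.
class BallMover {
public:
    BallMover(float moveForce, float boostImpulse, float boostCooldown)
        : moveForce(moveForce), boostImpulse(boostImpulse), boostCooldown(boostCooldown) {}

    // Continuous force for this frame; stick axes are signed, in [-1, 1].
    Vec2 SteeringForce(Vec2 stick) const { return {stick.x * moveForce, stick.y * moveForce}; }

    // Advance the cooldown by the frame's delta time.
    void Tick(float dt) { cooldownLeft = std::max(0.0f, cooldownLeft - dt); }

    // A one-off impulse along the stick direction if the boost is ready, otherwise zero.
    Vec2 TryBoost(Vec2 stick) {
        float length = std::sqrt(stick.x * stick.x + stick.y * stick.y);
        if (cooldownLeft > 0.0f || length == 0.0f) return {0.0f, 0.0f};
        cooldownLeft = boostCooldown;
        return {stick.x / length * boostImpulse, stick.y / length * boostImpulse};
    }

    // 0 straight after boosting, 1 when ready again.
    float BoostCharge() const { return 1.0f - cooldownLeft / boostCooldown; }

private:
    float moveForce;
    float boostImpulse;
    float boostCooldown;
    float cooldownLeft = 0.0f;
};
```

Normalising the boost direction means a half-pushed stick still gives a full-strength boost, leaving the analogue range for fine steering.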

With those modelled and codified, I moved on to their events. While hard-coding some test levels, I found that the unintended behaviour of some tiles actually increased my options when donning my content-developer hat: for example, upward-facing flippers would still propel you upwards if you were touching their sides, meaning I could create Sonic-esque speed-tunnels. Additionally, the exit was originally a hole that would teleport you back to the spawn position, to make testing easier. However, by adding multiple holes to a level, I essentially created traps. This was a convenient idea, because it dealt with loss-of-progression without having to implement a life system (or enemies), and holes ended up becoming extremely important.
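The tile behaviours could be expressed as TileEntity subclasses that each react to the ball, plus a factory mapping level-file ids to them. This is a sketch under assumptions: TileEntity and the four tile types come from this write-up, while Ball, OnBallContact, MakeTile, the id numbering, the flipper’s launch vector and the bumper multiplier are all hypothetical. A Hole pointed at the spawn position doubles as a trap.

```cpp
#include <cassert>
#include <memory>
#include <stdexcept>

struct Vec2 { float x, y; };
struct Ball { Vec2 position; Vec2 velocity; };

// Base tile: each subclass decides how it reacts when the ball touches it.
class TileEntity {
public:
    explicit TileEntity(Vec2 position) : position(position) {}
    virtual ~TileEntity() = default;
    virtual void OnBallContact(Ball& ball) = 0;
    Vec2 position;
};

class Wall : public TileEntity {
public:
    using TileEntity::TileEntity;
    void OnBallContact(Ball&) override {}  // sits very still; the physics engine handles the bounce
};

class Bumper : public TileEntity {
public:
    using TileEntity::TileEntity;
    void OnBallContact(Ball& ball) override {  // propels you back, faster than you arrived
        ball.velocity = {-ball.velocity.x * kBoost, -ball.velocity.y * kBoost};
    }
    static constexpr float kBoost = 1.5f;  // hypothetical multiplier
};

class Flipper : public TileEntity {
public:
    Flipper(Vec2 position, Vec2 launch) : TileEntity(position), launch(launch) {}
    void OnBallContact(Ball& ball) override { ball.velocity = launch; }  // propels you in a direction
    Vec2 launch;
};

class Hole : public TileEntity {
public:
    Hole(Vec2 position, Vec2 destination) : TileEntity(position), destination(destination) {}
    void OnBallContact(Ball& ball) override {  // teleports you
        ball.position = destination;
        ball.velocity = {0.0f, 0.0f};
    }
    Vec2 destination;
};

// Hypothetical id scheme: 0 empty, 1 wall, 2 bumper, 3 flipper, 4 hole (back to spawn).
std::unique_ptr<TileEntity> MakeTile(int id, Vec2 position, Vec2 spawn) {
    switch (id) {
        case 0: return nullptr;
        case 1: return std::make_unique<Wall>(position);
        case 2: return std::make_unique<Bumper>(position);
        case 3: return std::make_unique<Flipper>(position, Vec2{0.0f, 10.0f});
        case 4: return std::make_unique<Hole>(position, spawn);
        default: throw std::invalid_argument("unknown tile id");
    }
}
```

Keeping the behaviour inside each subclass is what lets the gameplay subsystem itself stay thin: it only needs to call `OnBallContact` and check win conditions.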

Most of the gameplay programming came in the form of defining new gameplay entities and how they should react in certain circumstances. The actual innards of the gameplay subsystem only served to monitor win conditions and convert input events into movement events.

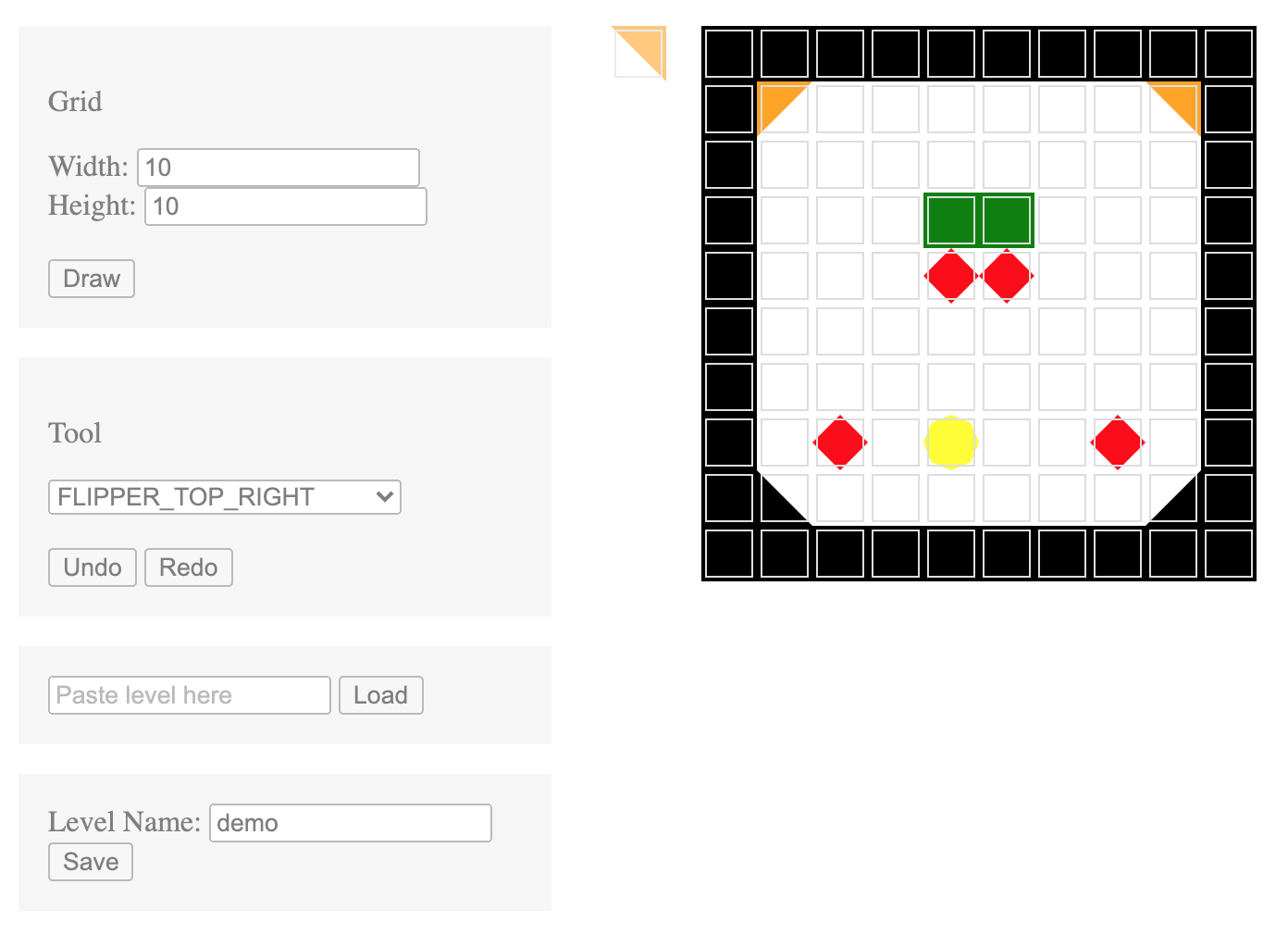

At one point, I got sick of manually typing out my levels, and caved to the internal pressure of creating a level editor. I’m quickest at prototyping in JavaScript, so by happy coincidence I was able to put this online for players to use more easily. That could be a write-up of its own, so I’ll move on.

Finally, with some actual, fiendishly hard content, and a very generous hour or so left before my midnight deadline, I added some nice UX touches: I made the shader of the pinball change to reflect the state of its boost timer. I added a splash screen indicating the controls, and an ending screen to show once all ten shipped levels were completed. It wasn’t a graphic design course, but I’m fairly confident with Photoshop, and Dafont did me justice.

Playtesting

THIS IS THE BEST. Seeing players smile and swear because of something you made is A-M-A-Z-I-N-G.

Back-patting aside, this is a really crucial step. Through play-testing, I was able to see the impact of the small, seemingly innocuous decisions I’d made along the way as they snowballed into individual gripes and glee. Some pick things up quicker than others. Some don’t at all, and you wonder if you’ve failed to some extent as a designer.

Remarkably, it never crashed; however, it did stutter noticeably when switching levels, especially if the players were progressing quickly. Some found loopholes in my system, and kudos to them: one player realised that if you repeatedly held down to speed your descent, then held up as you bounced, you could slightly increase your momentum ad infinitum, meaning not only were they able to effectively cheat, but they were able to violate the first and second laws of thermodynamics.

I also found it interesting to see how my peers had approached the same problems in vastly different ways, with some focusing more on specific areas. One enterprising individual made more of an NVIDIA-style raytracing showcase than a game, one focused on local-multiplayer controls, one on intuitive 3D camera controls, and another’s simple shape-puzzler was underpinned by a procedural generation algorithm.

In the future, I plan on investigating networking, more sophisticated graphics capabilities (particle effects etc.), security against data-mining (my friend replaced all of the textures with pictures of my face), and a more conscious adherence to design patterns.