
Inspiration

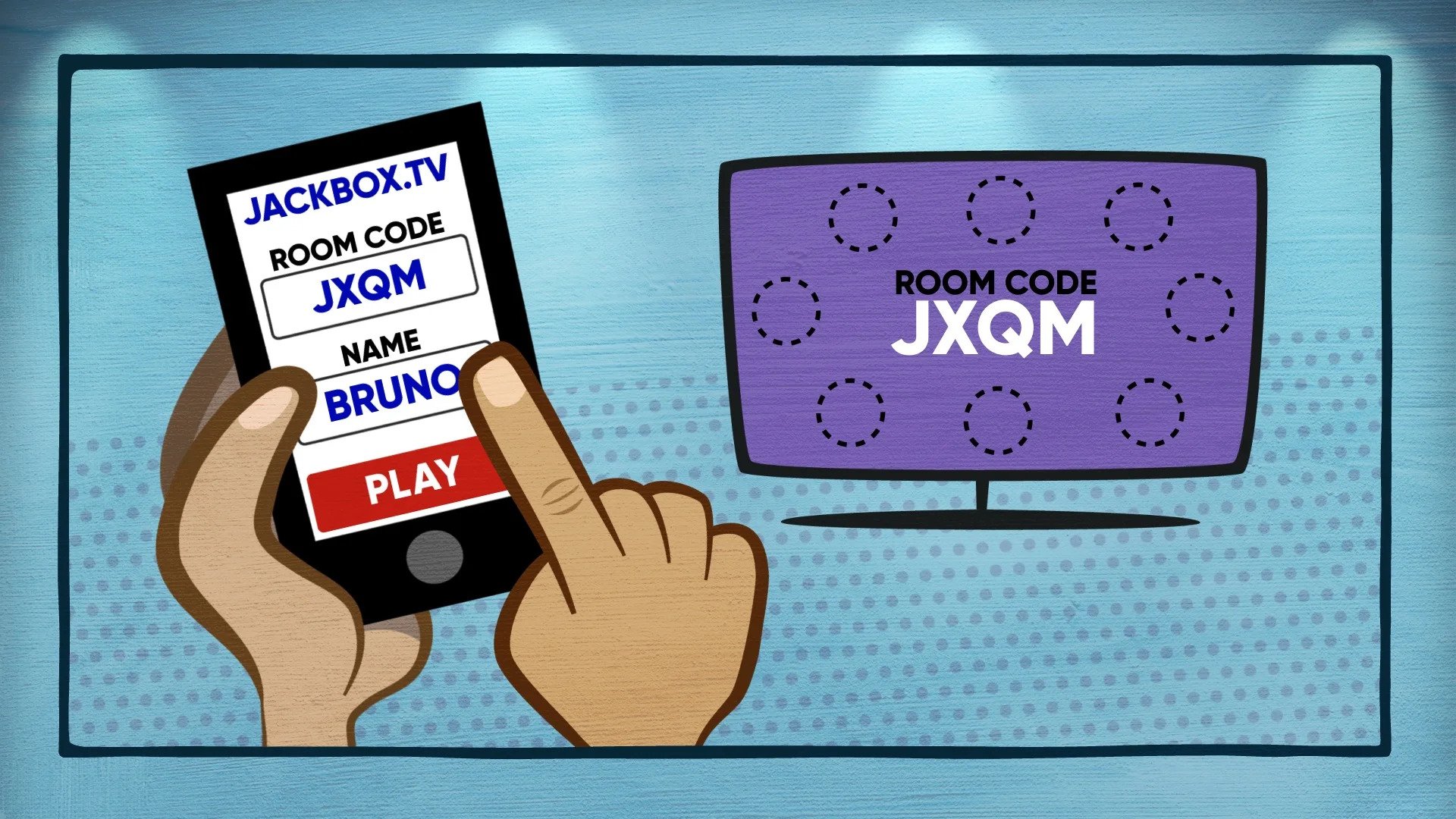

I wanted to jazz up the 'pub quiz' format I'd gotten tired of during the Covid lockdowns over the past few years, and reduce the burden on a quiz host - no more messing with PowerPoints! I took inspiration from Jackbox Games and the way they present their apps to players: a game client married to a separate spectator/host control view intended to be livestreamed.

Jackbox games are highly accessible as they can be joined on any device with a web browser and internet connection

Design & Iteration

Managing my tasks on Trello, I did lots of play-testing with friends before settling on a winning quiz-show formula. My mission statements were to:

- Accommodate players who might not know the answer at all

- Prevent the game ending too early

- Ensure winners didn't win by a huge margin

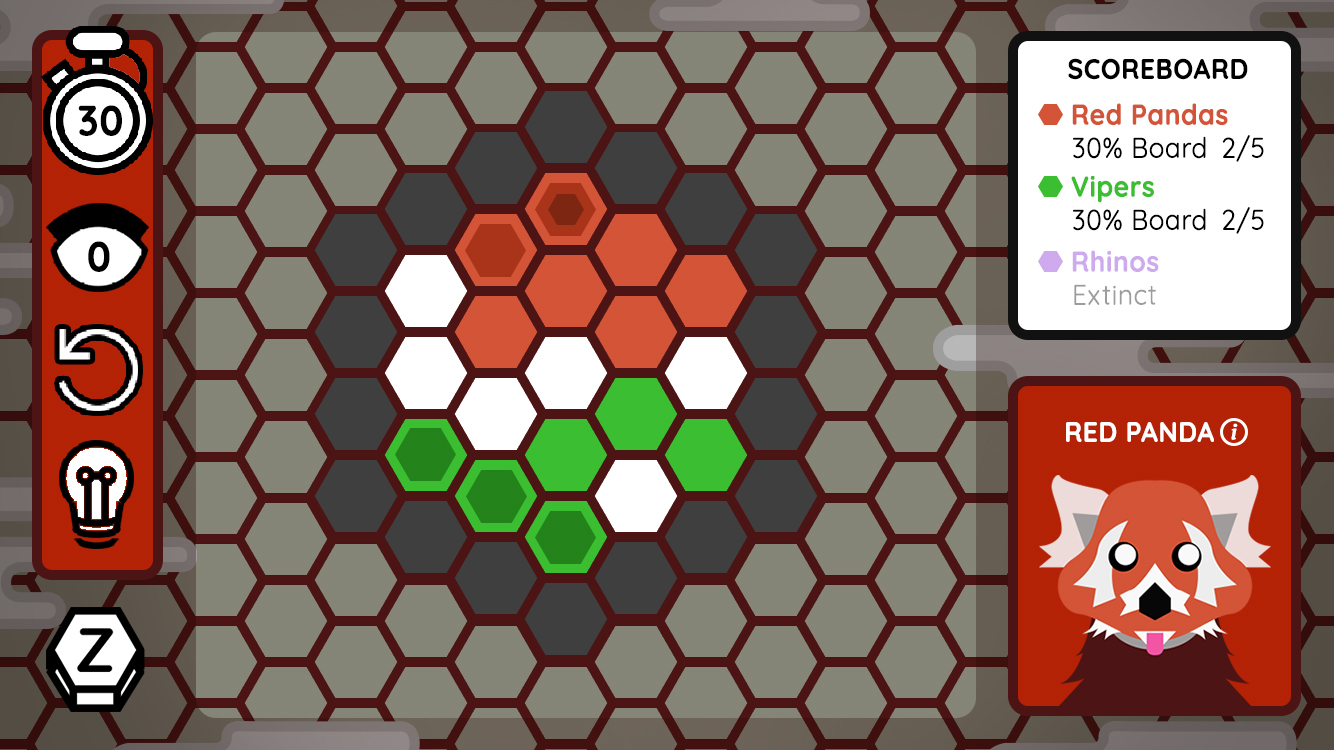

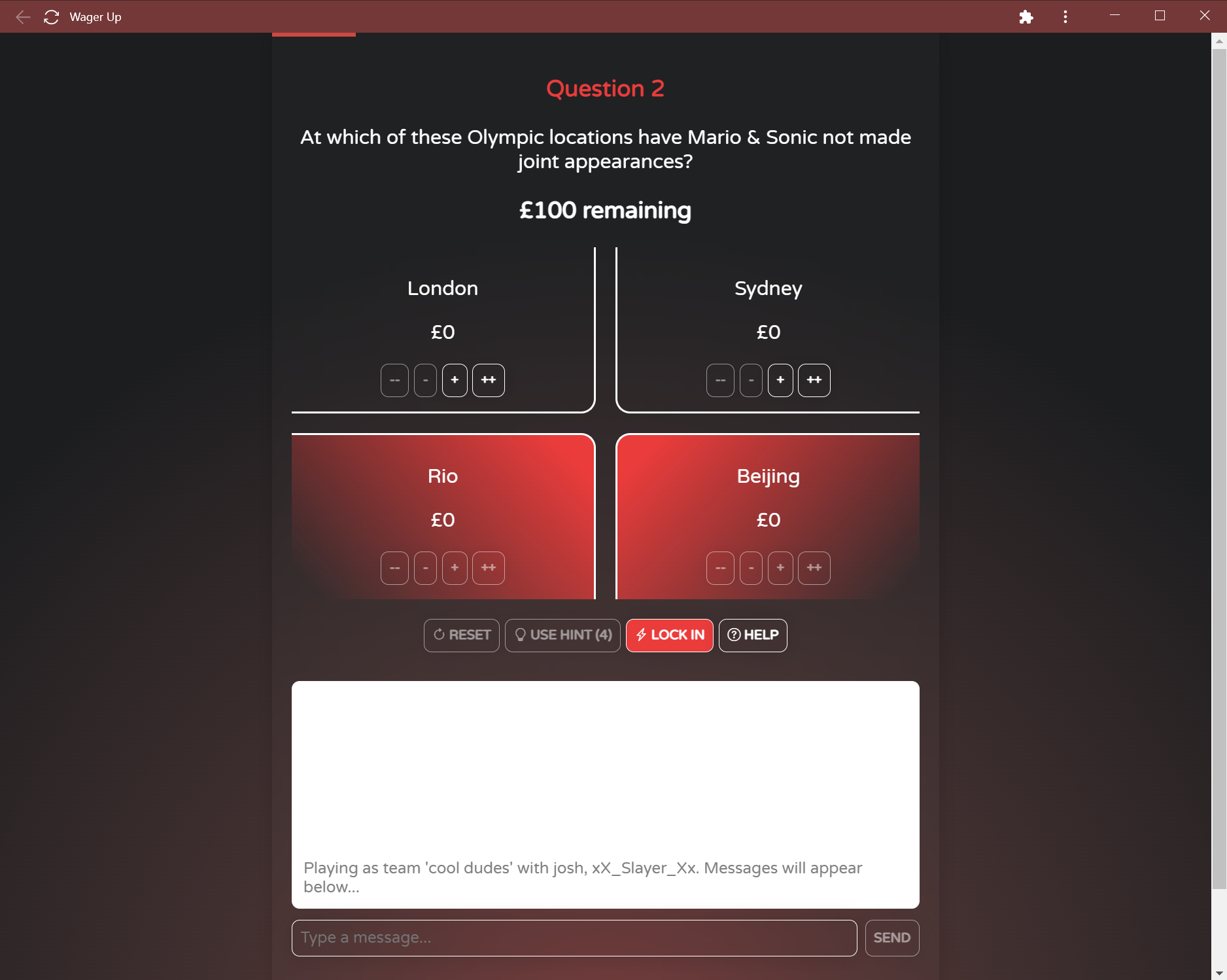

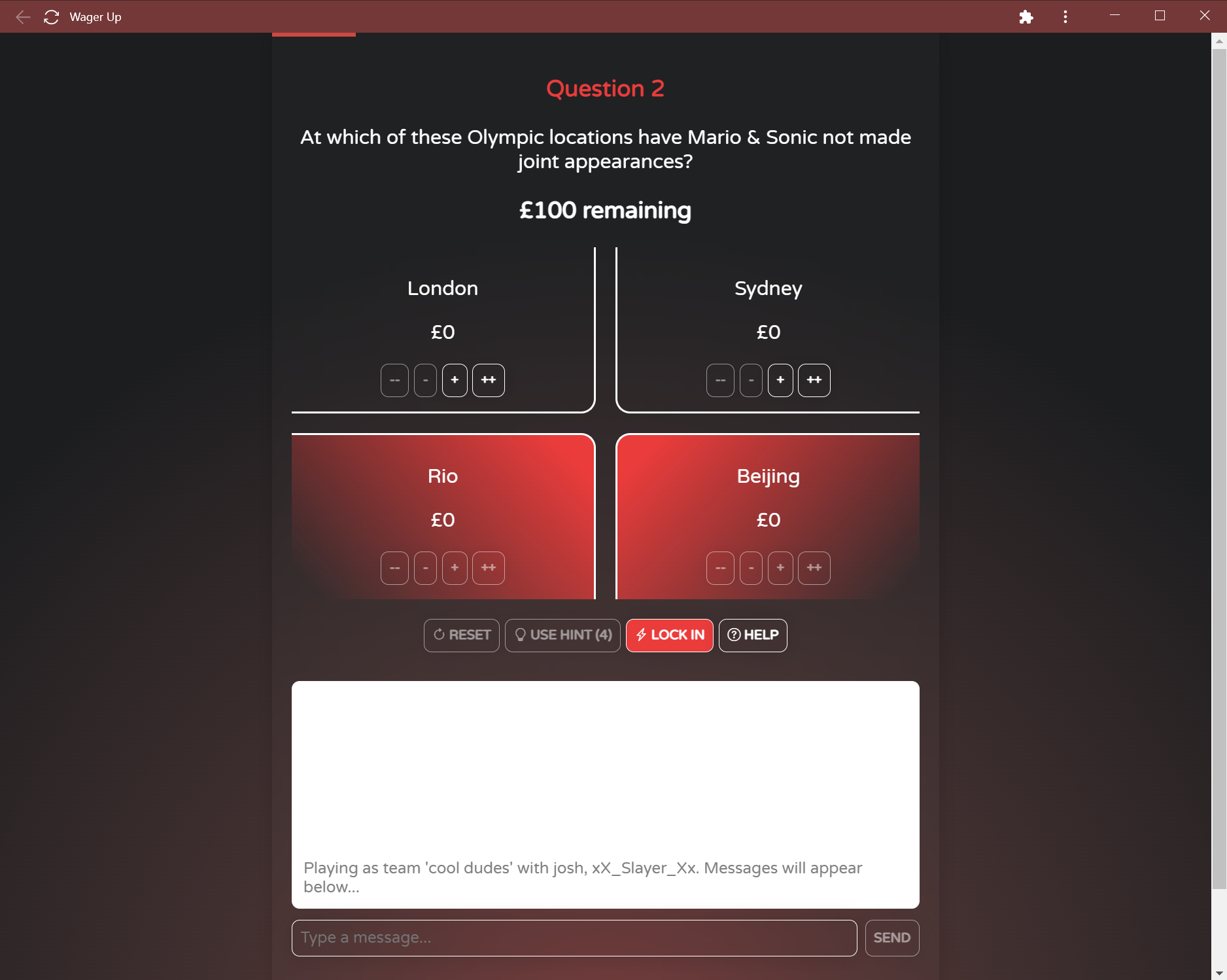

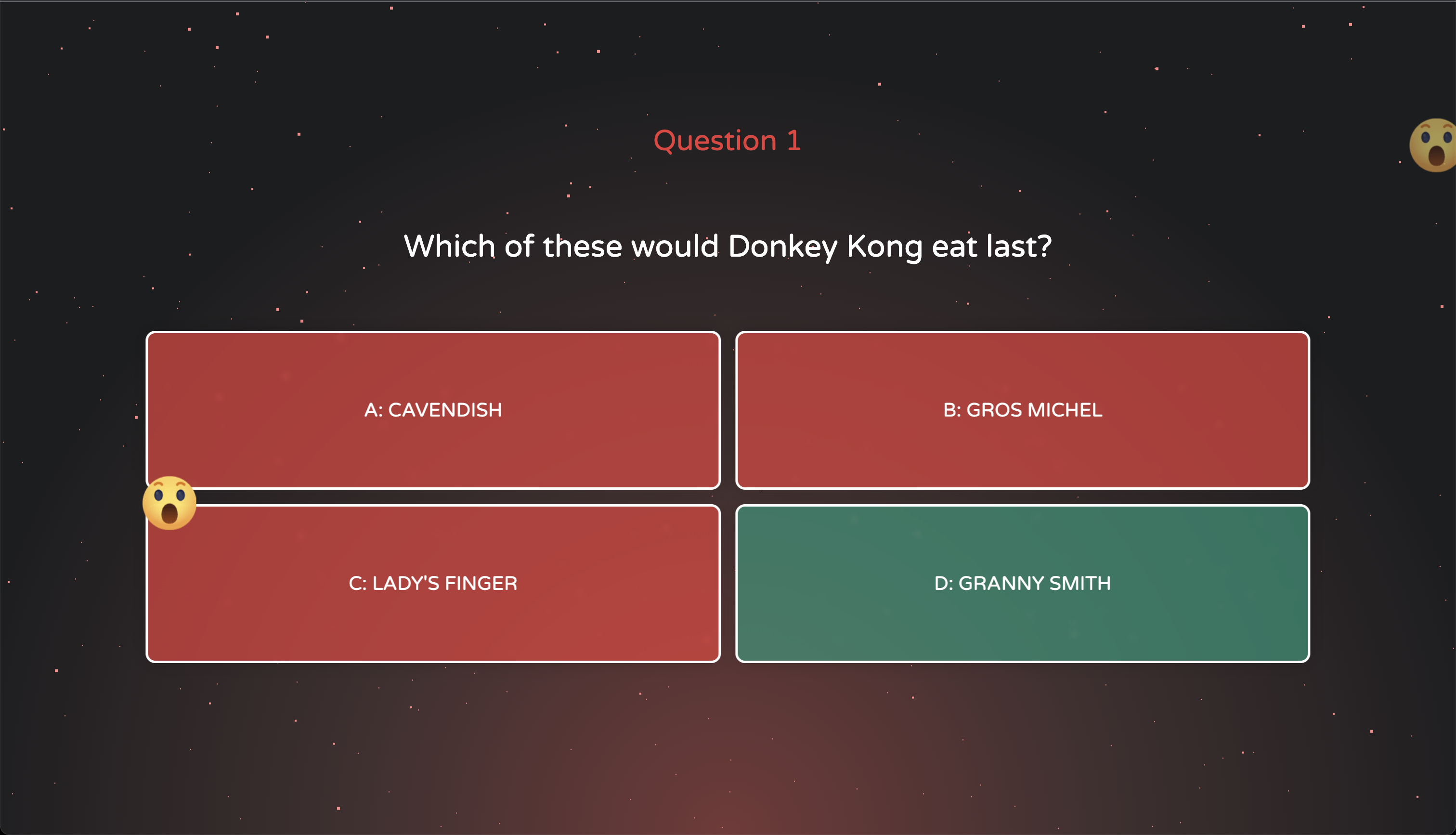

The justification for these goals was primarily that it's not fun to be part of a quiz where you don't feel like you can contribute. Therefore, I wanted the game to feature team-based multiple-choice wagering - options could be written kindly to lead players towards a more likely subset of answers (e.g. one obviously wrong, egregious option per question). I also wanted to create a spectacle whereby hosts could pause for dramatic effect between questions and create a narrative from the game's events so far: "Team One have fallen below Team Two this round, who used their hint expertly!". I was essentially reverse-engineering the emotional pillars of the game - fairness, competition and frivolity.

I initially copied the format of 'The 100k Drop', but found that if all teams went all-in on an incorrect answer, the game would end before all the questions had been seen, making the length of a session hard to predict - I didn't want people to commit an hour of their time to attending a quiz, only to leave disappointed. So, I experimented with the idea of wagering being additive - you could win money, but not lose any. There, I encountered the issue that players doubling their money each question would win by several million pounds over the course of a 20-question quiz.

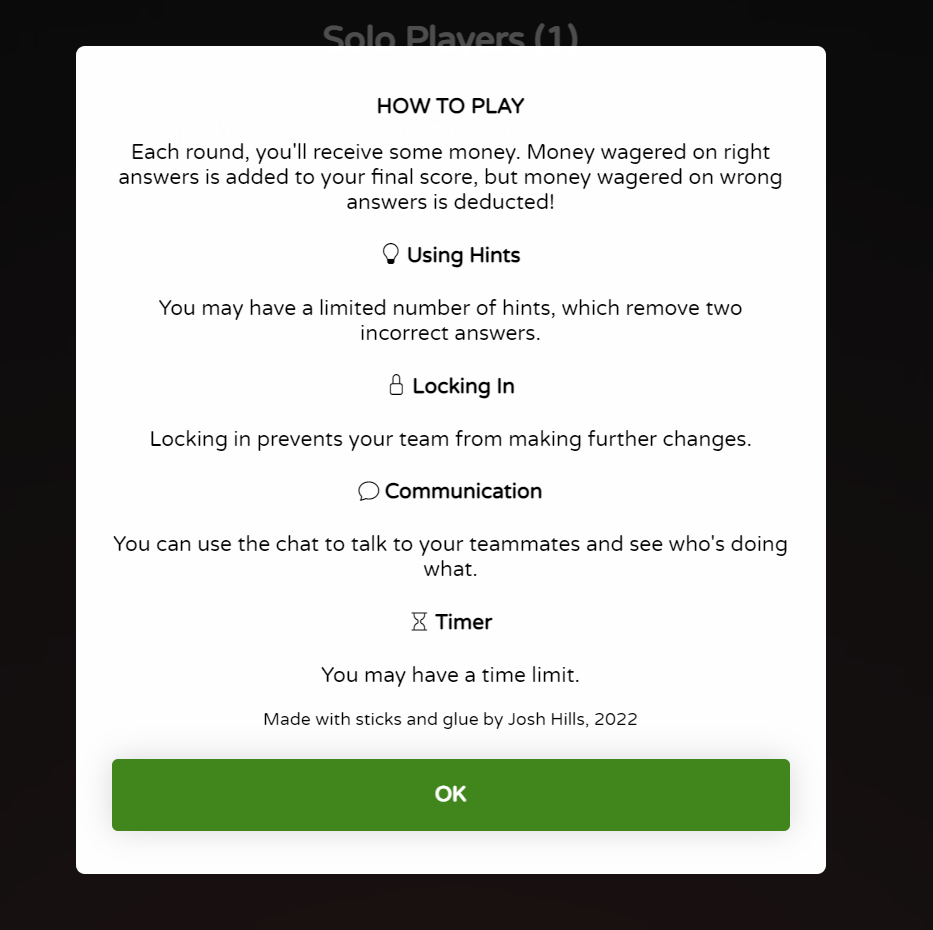

Eventually, I decided that each round, each team would receive a limited pot of money to wager with - any money not spent would disappear, any spent on incorrect answers would be deducted from their final score, and any spent on correct answers would be added to their final score. In addition to meeting my criteria, the benefit of this format was that there could be a deterministic maximum potential score - 50k per question over 20 questions means theoretically you can win a nice round £1 million.
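A minimal sketch of this scoring rule, using the figures quoted above (a 50k pot, 20 questions). The function names and the shape of the `wagers` mapping are assumptions made for illustration, not the game's actual code:

```python
POT_PER_QUESTION = 50_000  # from the text: 50k per question
QUESTION_COUNT = 20        # from the text: 20 questions


def score_question(wagers: dict[str, int], correct: str,
                   pot: int = POT_PER_QUESTION) -> int:
    """Return the change to a team's final score for one question.

    `wagers` maps an answer option to the amount placed on it
    (a hypothetical structure chosen for this sketch).
    """
    if any(amount < 0 for amount in wagers.values()):
        raise ValueError("wagers cannot be negative")
    spent = sum(wagers.values())
    if spent > pot:
        raise ValueError("cannot wager more than the pot")
    won = wagers.get(correct, 0)   # money on the correct answer is added
    lost = spent - won             # money on incorrect answers is deducted
    return won - lost              # anything unspent simply disappears


def max_score(pot: int = POT_PER_QUESTION,
              questions: int = QUESTION_COUNT) -> int:
    """Best case: the whole pot on the correct answer every time."""
    return pot * questions
```

Going all-in on the right answer every time gives `max_score()`, which is where the £1 million ceiling comes from; hedging across options trades that ceiling for a smaller possible loss.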

The final rule-set explained to players in a modal dialog

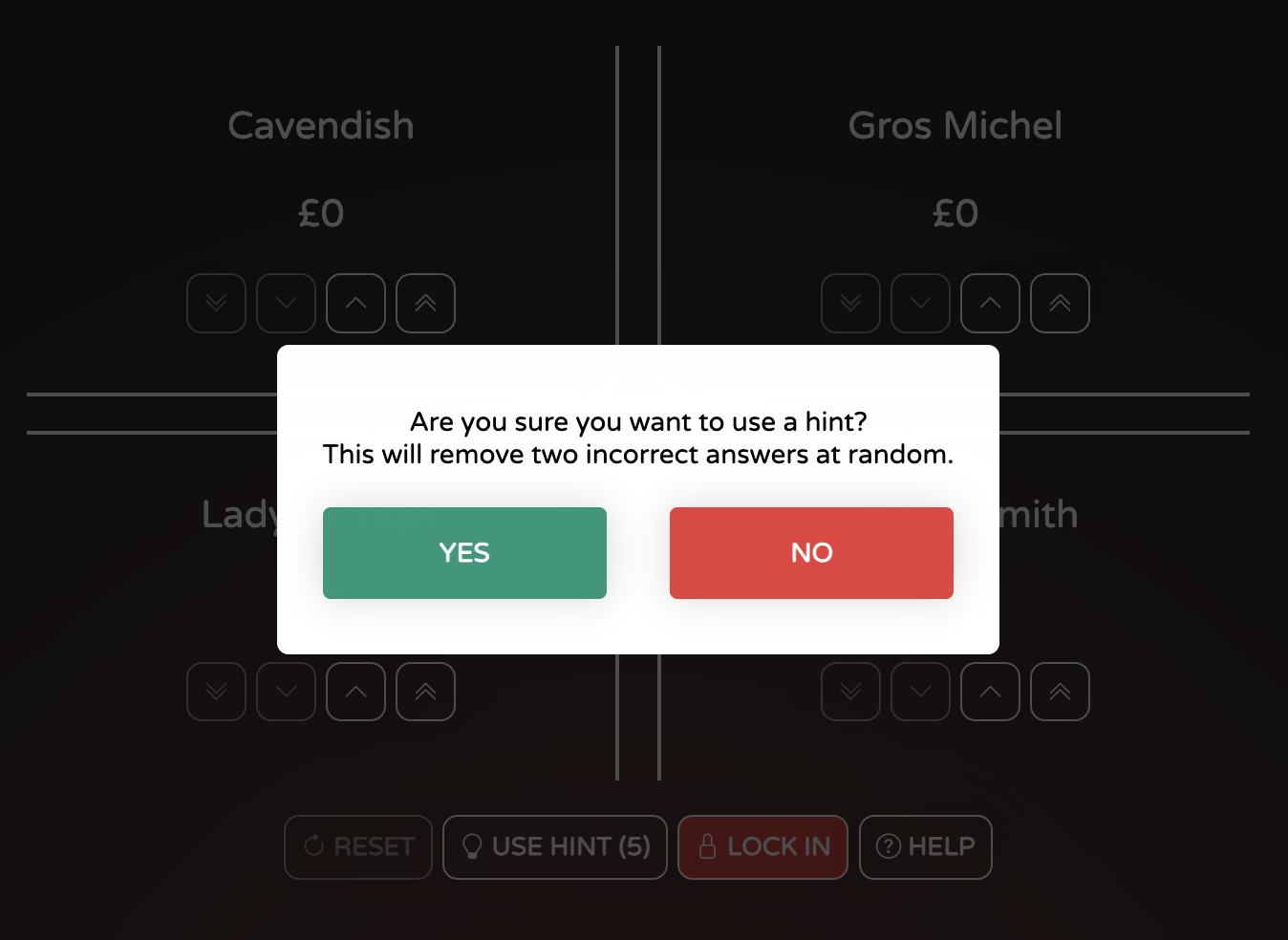

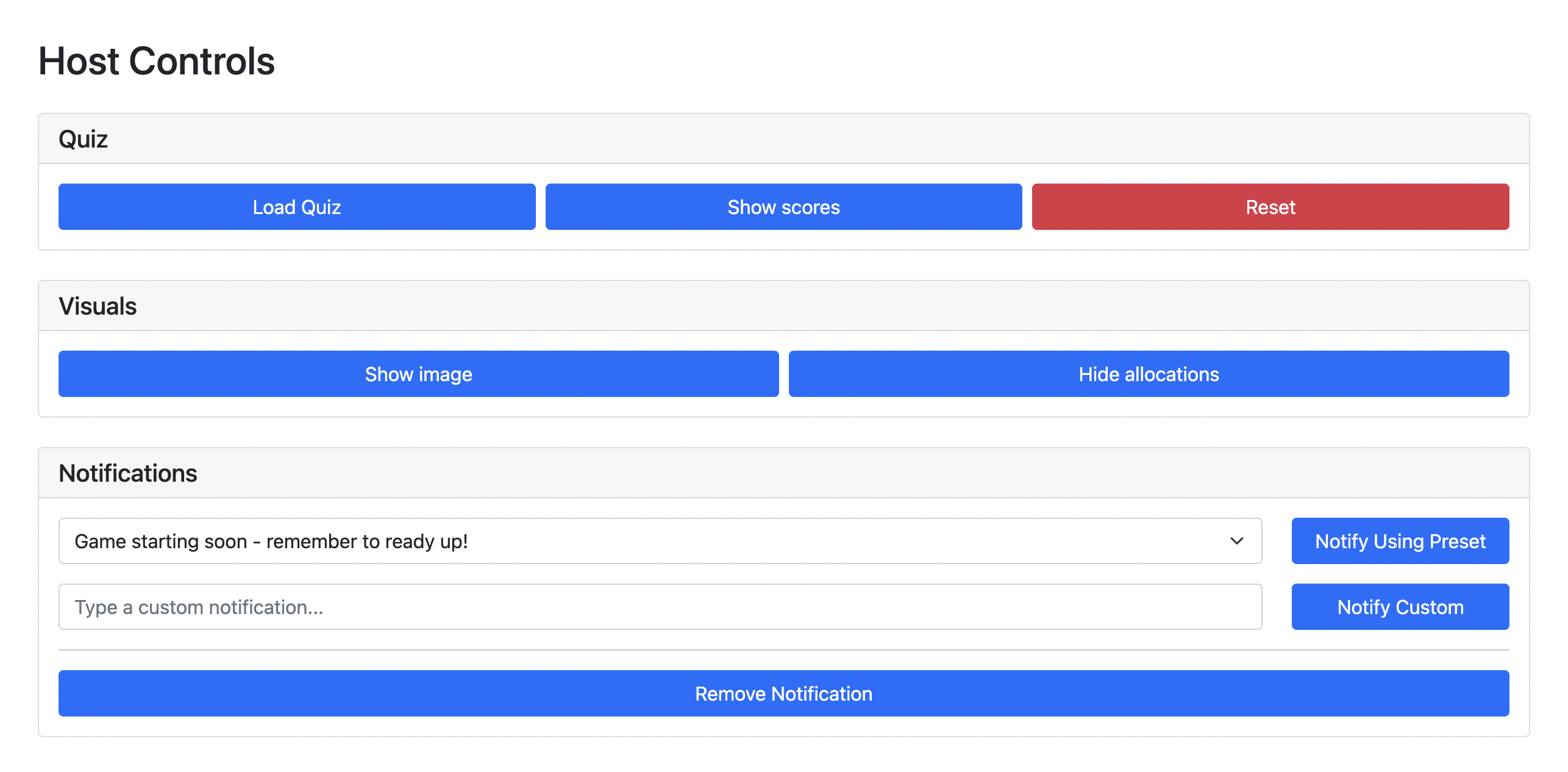

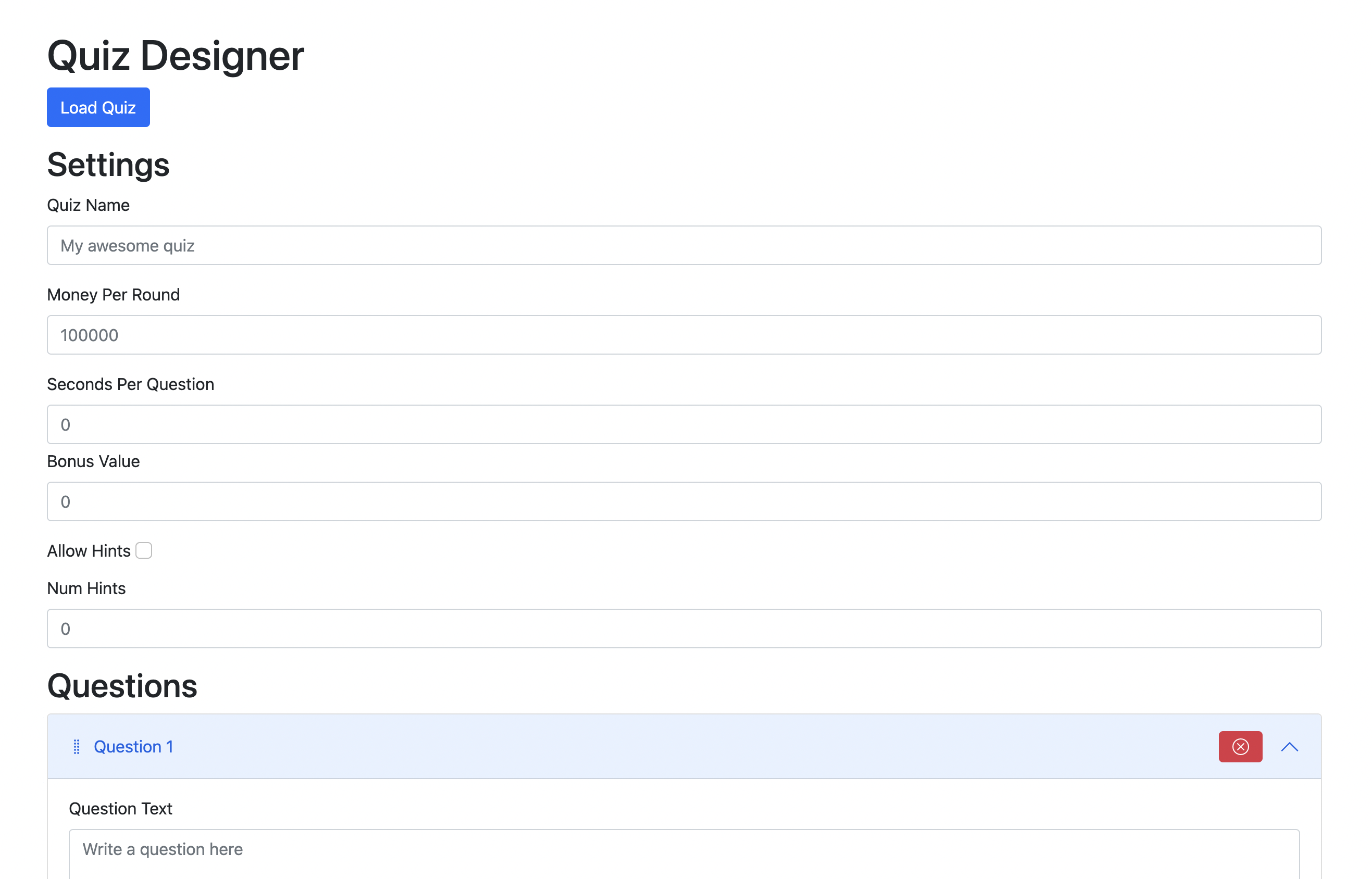

I embellished this with additional UX and quality-of-life features that were born of testing my own game hundreds of times, and of friends chiming in with their own ideas: a hint system to 50/50 the answers, a chat system to enable teams to communicate outside of VoIP, a countdown timer to constrain the length of the session, a quiz designer (wizard) to tune variables such as quantities of money and the questions themselves, an achievement system to still reward 'losers', a notification system to allow hosts to send players text messages, and, of course, an emote system so hosts could see real-time feedback from players.

Each of these features is a microcosm of the same feature in larger online games, which is my wheelhouse. Each one presents a different set of challenges - thanks to the small scope of this project, I could simply reload, test, see what was wrong, and then fix it: "Now that I have a chat system, what happens if I send too many messages? OK, I probably need to create a FIFO system and limit it in order to prevent the client from crashing". I acted as my own QA, doing bug-driven development.
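The FIFO idea from that chat example can be sketched as a bounded queue that silently drops the oldest message once a limit is reached. The class name and the limit of 50 are illustrative assumptions rather than the values used in the game:

```python
from collections import deque


class ChatLog:
    """Keeps only the most recent messages, first in, first out."""

    def __init__(self, max_messages: int = 50):  # limit is an assumption
        # A deque with maxlen discards from the opposite end when full.
        self._messages: deque[tuple[str, str]] = deque(maxlen=max_messages)

    def add(self, sender: str, text: str) -> None:
        self._messages.append((sender, text))

    def visible(self) -> list[tuple[str, str]]:
        """Messages in arrival order, oldest first."""
        return list(self._messages)
```

Because the bound lives in the data structure itself, a flood of messages can never grow the log beyond `max_messages`, whichever side of the connection holds it.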

Technology

Re-use

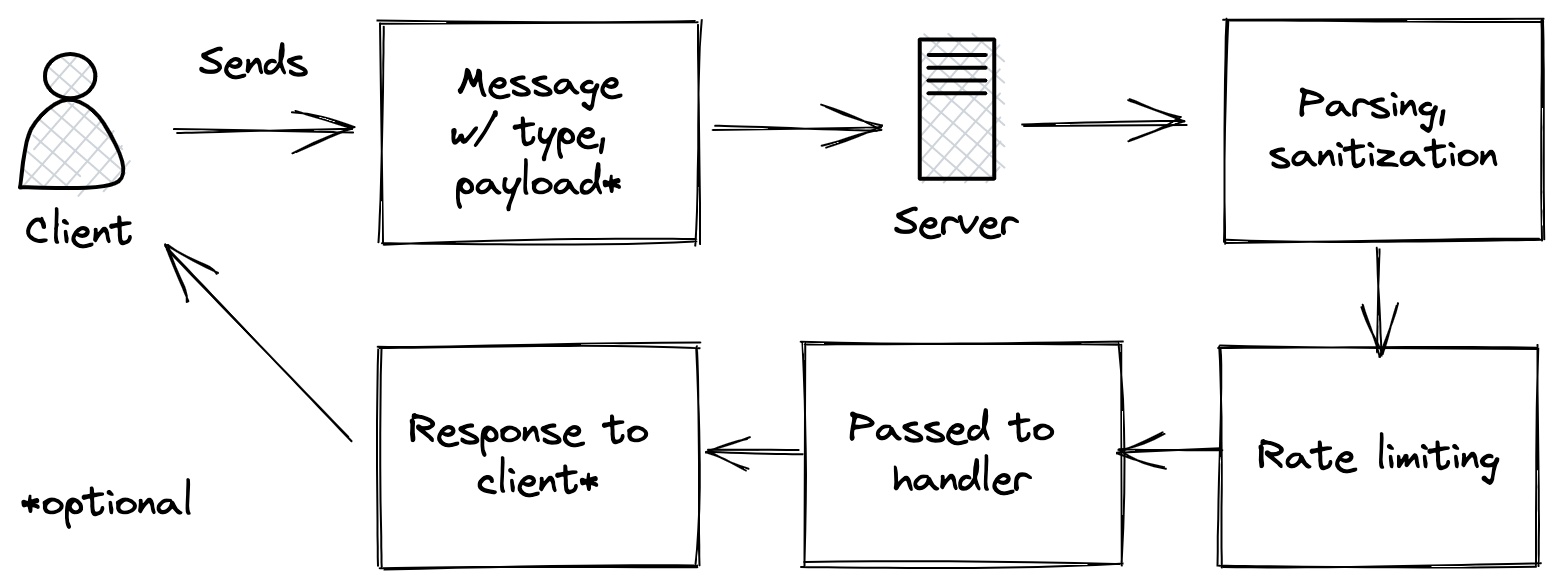

I chose WebSockets as the underlying technology because, after an initial HTTP handshake, they keep a single TCP connection open, so messages can be sent to and received from clients frequently without needing to establish new connections or guarantee a response as RPC would. This meant I could host a resilient, authoritative server and create a custom marshalling library (I chose JSON for simplicity) in order to re-use the same stack for other, similar projects, with the only things changing being the specific message schema and the set of handler functions relevant to each.

A high-level overview of the message processing solution - the main perk being that the server and player entities can be swapped, as clients use the same library to rate limit and register handlers to messages originating from the server
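As a rough sketch of the handler-registration idea described above: messages are JSON objects carrying a type, and whichever side receives one looks up a handler for that type. The envelope fields (`type`, `payload`) and the `MessageRouter` name are assumptions for illustration; the real schema is not shown in this post:

```python
import json
from typing import Any, Callable

Handler = Callable[[str, dict[str, Any]], None]


def encode(message_type: str, payload: dict[str, Any]) -> str:
    """Marshal a message into the (assumed) JSON envelope."""
    return json.dumps({"type": message_type, "payload": payload})


class MessageRouter:
    """Maps message types to handler functions.

    The same class could sit on the server or the client, since it only
    cares about who sent the message and what type it is.
    """

    def __init__(self) -> None:
        self._handlers: dict[str, Handler] = {}

    def on(self, message_type: str, handler: Handler) -> None:
        self._handlers[message_type] = handler

    def dispatch(self, sender_id: str, raw: str) -> bool:
        """Decode and route one message; False means it was rejected."""
        try:
            message = json.loads(raw)
        except json.JSONDecodeError:
            return False
        if not isinstance(message, dict):
            return False
        message_type = message.get("type")
        payload = message.get("payload", {})
        if not isinstance(message_type, str) or not isinstance(payload, dict):
            return False
        handler = self._handlers.get(message_type)
        if handler is None:
            return False
        handler(sender_id, payload)
        return True
```

A new project would then only need its own set of `on(...)` registrations; a `False` return gives the caller a natural place to count bad input from a client.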

Lobbies

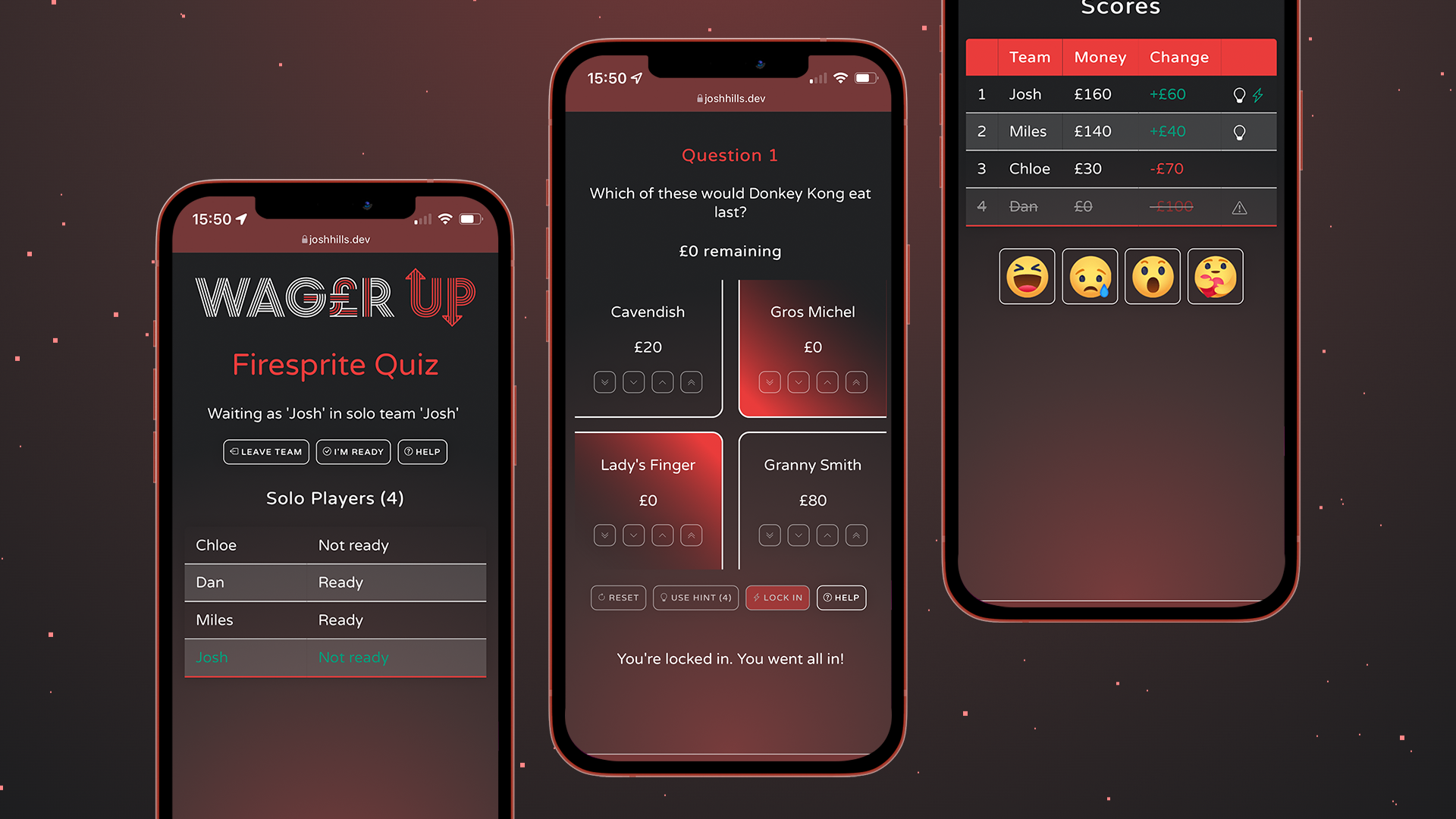

The biggest problem to solve was ensuring that people could join the quiz easily. Matchmaking and managing teams is generally the driest and most important topic of online games, since it's the player's first introduction to the game and an unpleasant experience can lead to a higher bounce-rate and burden on organizers. I managed to create a system whereby players could create a team, or play solo - solo players are simply part of their own team, which is marked as solo, to create consistency in the model.

The team structure and ample buttons allow for late joiners and team-switches, which again reduces the host's workload by giving players the option of self-service
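One way to model "solo players are a team of one" is sketched below. The `Team` and `Lobby` names, and the naming of solo teams after their player, are assumptions for illustration only:

```python
from dataclasses import dataclass, field


@dataclass
class Team:
    name: str
    solo: bool = False
    members: list[str] = field(default_factory=list)


class Lobby:
    def __init__(self) -> None:
        self.teams: dict[str, Team] = {}

    def _remove(self, player: str) -> None:
        """Take the player out of any team, deleting teams left empty."""
        for name, team in list(self.teams.items()):
            if player in team.members:
                team.members.remove(player)
                if not team.members:
                    del self.teams[name]

    def join(self, player: str, team_name: str, solo: bool = False) -> Team:
        """Create or join a team; also covers late joins and switches."""
        existing = self.teams.get(team_name)
        if existing is not None and existing.solo and existing.members != [player]:
            raise ValueError("solo teams hold exactly one player")
        self._remove(player)
        team = self.teams.setdefault(team_name, Team(team_name, solo))
        team.members.append(player)
        return team

    def play_solo(self, player: str) -> Team:
        # A solo player is just a one-person team flagged as solo.
        return self.join(player, f"{player} (solo)", solo=True)
```

Scoring and wagering code can then treat every entry in `teams` identically, with the `solo` flag only affecting how the lobby is displayed and who may join.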

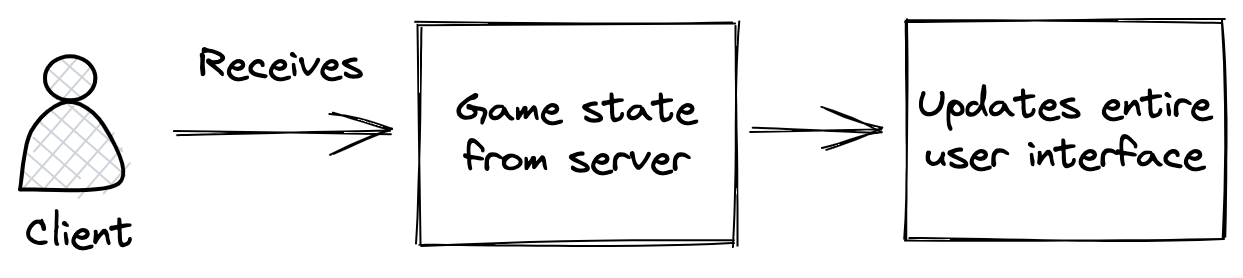

Managing State

After an initial handshake with the server, a client is assigned an ID to cache so that they can refresh their page without breaking the link to their player object. Security for the host view is done via obscurity (extra connection parameters), which worked for my purpose. All clients are sent the game state when it changes, if the change pertains to them, and they are only given what they are allowed to see. With the time I had, I didn't manage to create a system whereby players would initially receive the whole state and then only receive diffs to that state, meaning payloads are larger than they need to be. Nonetheless, sending the full state allowed me to massively simplify the code that updated the DOM, and meant that I could forgo relying on an SPA technology like ReactJS.

Since not all elements of the UI will actually be modified every time the game state is received by the client, this is a surprisingly performant, if brittle, solution
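To illustrate "only given what they are allowed to see", here is a hedged sketch of building a per-client view from the full state. The state layout (a `question` with an `answer`, `teams` with `members` and `wagers`, a `revealed` flag) and the visibility rules are invented for this example; the real game's state is not documented here:

```python
import copy
from typing import Any


def view_for(state: dict[str, Any], player_id: str,
             is_host: bool = False) -> dict[str, Any]:
    """Return a copy of the state trimmed to what one client may see."""
    view = copy.deepcopy(state)  # never mutate the authoritative state
    if is_host:
        return view              # assumption: the host sees everything
    if not state["revealed"]:
        # Assumption: the answer stays hidden until the host reveals it.
        view["question"].pop("answer", None)
    for team in view["teams"].values():
        if player_id not in team["members"]:
            # Assumption: other teams' wagers are private.
            team.pop("wagers", None)
    return view
```

Since the server does the trimming, the client can render whatever it receives without any knowledge of the rules about what should be hidden.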

Hosting & Load Testing

I hosted the mini-lithic app on the same DigitalOcean droplet as my website. I containerized it using Docker Compose, and the container is reached indirectly through an Nginx proxy-pass. This means it can use the wss protocol, while keeping a consistent URL structure with the rest of the site.

I wrote a script to automate connecting n players to the app and, based on the game state, simulate messages. I found that, once I had implemented a sensible rate limiting solution, and kicked clients that provided unexpected input, a single server on paltry virtualized hardware could cope with up to 200 players before experiencing visible latency, which satisfied me.
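The post doesn't say which rate-limiting scheme was used; a token bucket is one common choice and is sketched here purely as an example. The capacity and refill values are placeholders, and time is passed in explicitly so the behaviour is easy to test:

```python
class TokenBucket:
    """Allows short bursts while capping the sustained message rate.

    A swapped-in, generic technique: not necessarily what the game uses.
    """

    def __init__(self, capacity: float, refill_per_second: float,
                 now: float = 0.0) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = capacity      # start full so a new client can burst
        self.updated = now

    def allow(self, now: float) -> bool:
        """Return True if a message arriving at time `now` is accepted."""
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity,
                          self.tokens + elapsed * self.refill_per_second)
        self.updated = max(self.updated, now)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

In practice there would be one bucket per connection, with `time.monotonic()` supplying `now`; a client that keeps getting `False` is a candidate for being kicked, alongside those sending unexpected input.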

Heartbeats & Clocks

In order to prevent idle connections being closed by the server, I implemented a simple ping-pong system. Much later, I realised that I had a clock-synchronization problem - each client might have its own, slightly different understanding of the current time, meaning I couldn't rely on each client to manage its own timer for each question. I solved this by bundling the server's current time with each heartbeat, so the client could figure out its average latency to the server and adjust accordingly - this interpolation seemed to work really well, which was satisfying to see!
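A sketch of how a client might turn those heartbeats into a shared clock, assuming the latency is roughly symmetric (the server's timestamp is about half a round trip old when it arrives). The class name, the averaging window and the method names are illustrative assumptions:

```python
from collections import deque


class ClockSync:
    """Estimates the offset between the local clock and the server's."""

    def __init__(self, window: int = 10) -> None:  # window is an assumption
        self._offsets: deque[float] = deque(maxlen=window)

    def on_heartbeat(self, sent_at: float, received_at: float,
                     server_time: float) -> None:
        """Record one ping-pong: local send/receive times plus server time."""
        round_trip = received_at - sent_at
        # Assume symmetric latency: the server stamped its clock half a
        # round trip before we received the reply.
        estimated_server_now = server_time + round_trip / 2
        self._offsets.append(estimated_server_now - received_at)

    @property
    def offset(self) -> float:
        """Average of recent samples, smoothing out latency jitter."""
        if not self._offsets:
            return 0.0
        return sum(self._offsets) / len(self._offsets)

    def server_now(self, local_now: float) -> float:
        return local_now + self.offset

    def seconds_left(self, deadline_server_time: float,
                     local_now: float) -> float:
        """Time remaining on a question whose deadline is in server time."""
        return max(0.0, deadline_server_time - self.server_now(local_now))
```

With the deadline expressed in server time, every client counts down towards the same instant even if their local clocks disagree by several seconds.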

Collaboration

I pitched the idea to my producer (and eventual co-host) Dan Jones, and people engagement manager Chloe Sinclair - they loved the idea, and I was super grateful for their enthusiasm. I also recruited the talented Miles Rodyakin as a graphic designer, as there was suddenly a deadline and ergo motivation to polish and put the whole thing into production.

This also meant I got to engage in a bit of marketing, communicating about the project in a newsletter and creating some assets to share:

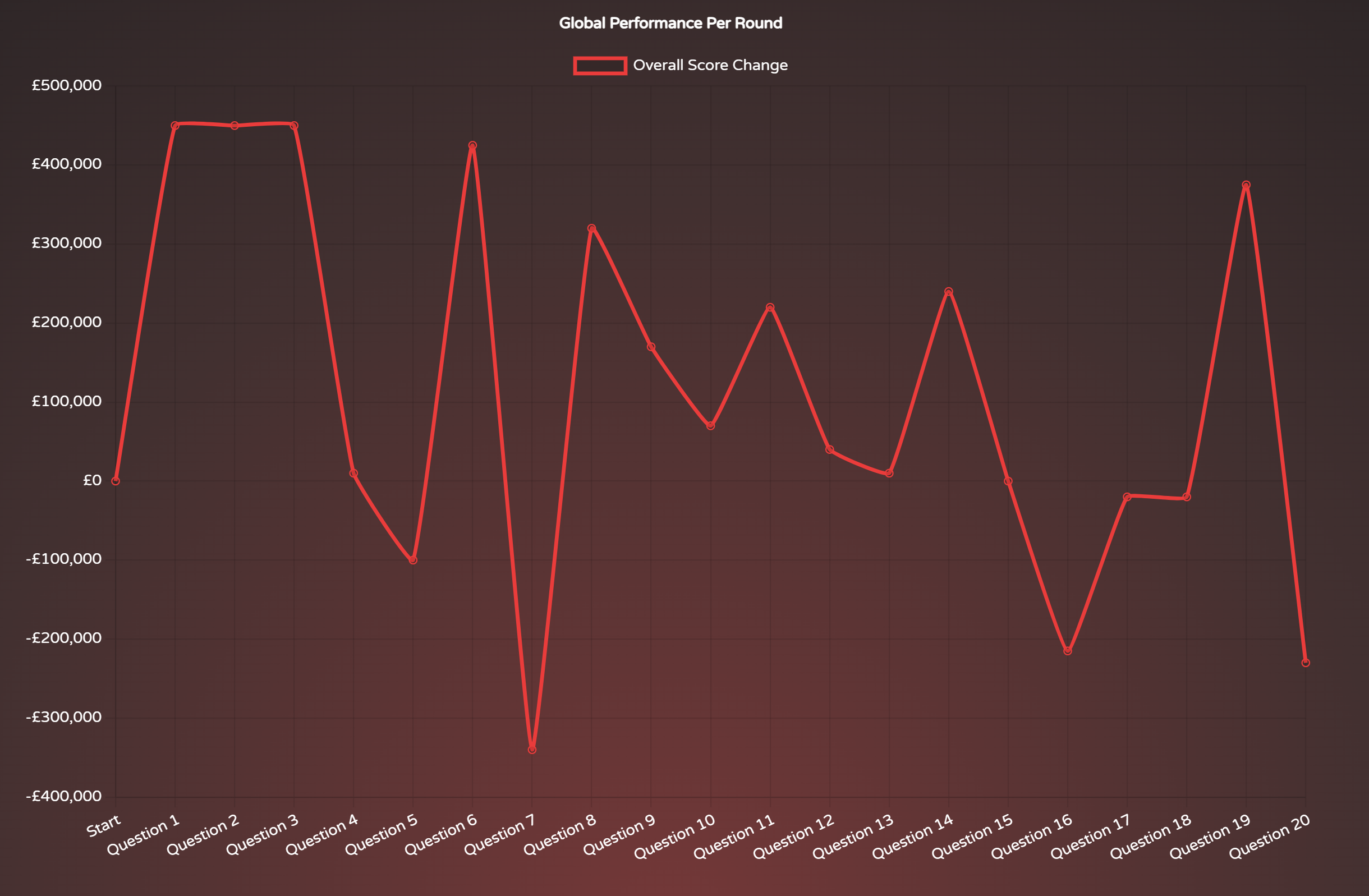

The stakes were pretty low, but I was nervous nonetheless. There was a single input bug on the day, but otherwise it went smoothly with a real cohort of ~30 players. I created a survey to ask for feedback, which received positive reviews and some feature requests. Having also provided a privacy policy, I was able to collect and graph data from the session in order to understand how players responded to each question.

Interactive graphs from this quiz show which questions were difficult and which were easy